Google Ads Enhances Asset Studio with Generative AI to Streamline Creative Workflows for Global Advertisers

Google has significantly expanded the capabilities of its Asset Studio within the Google Ads ecosystem, integrating advanced generative artificial intelligence to bridge the gap between technical ad management and creative production. As the digital advertising landscape shifts toward a "creative-first" paradigm, these AI-driven tools are designed to assist advertisers who lack the resources of professional graphic designers or video production houses. By utilizing large language models and sophisticated image-generation technology, Google is enabling businesses to generate high-quality visual content from simple text prompts, edit existing product photography into lifestyle imagery, and transform static images into engaging video assets. This evolution represents a fundamental change in how performance marketing is executed, moving away from manual asset creation toward an automated, iterative process that prioritizes speed and relevance in a highly competitive attention economy.

The core of this technological push lies in the realization that while Google’s algorithms have mastered bid strategies and searcher intent prediction, the "creative" remains the single most significant variable in campaign performance that advertisers still struggle to control. Common challenges, such as the high cost of professional photography, the technical difficulty of video editing, and the need for multiple aspect ratios to satisfy diverse platforms like YouTube, Gmail, and the Display Network, often create a bottleneck for brands. Asset Studio addresses these hurdles by providing a centralized hub where AI does the heavy lifting, allowing advertisers to focus on strategic messaging rather than technical execution.

The Evolution of AI Integration in Google Ads

The integration of generative AI into Asset Studio is not an isolated development but rather the latest milestone in a decade-long trajectory toward fully automated advertising. To understand the significance of the current tools, one must look at the chronology of Google’s AI implementation. The journey began in earnest around 2016 with the introduction of "Smart Bidding," which used machine learning to optimize for conversions in real time. In 2018, Google introduced Responsive Search Ads (RSAs), which automatically test different combinations of headlines and descriptions to find the best-performing creative for each user.

The most pivotal shift occurred in late 2021 with the launch of Performance Max (PMax). This campaign type signaled Google’s transition to a "black box" model, where the system manages bidding, budget, and channel placement across all of Google’s inventory. However, PMax revealed a critical weakness: the system’s effectiveness was limited by the quality of the assets provided by the advertiser. In response, Google spent 2023 and 2024 embedding generative AI directly into the asset creation flow. Today, Asset Studio serves as the creative engine for PMax and Demand Gen campaigns, ensuring that the AI has a constant supply of fresh, high-quality visual material to test and optimize.

Advanced Image Generation and Contextual Editing

The most striking feature of the updated Asset Studio is its ability to generate photorealistic images from scratch using text-to-image prompts. For instance, an e-commerce retailer selling apparel can input a descriptive prompt such as, "Create a variety of images that showcase comfortable, cushioned socks in a domestic setting, featuring a mix of close-ups and lifestyle shots." The AI generates multiple variations, which the advertiser can then refine through iterative prompting.

A key advancement in this tool is the "in-painting" and "out-painting" capability, which allows for precise edits without restarting the generation process. If an initial image contains an unwanted element—such as a coffee mug in a shot meant to focus solely on footwear—the advertiser can simply prompt the tool to "remove the mug." While early iterations of this technology occasionally struggle with atmospheric details, such as lingering steam from a removed cup, the system’s ability to refine images based on natural language feedback significantly reduces the time required for traditional photo retouching.

Beyond creating images from thin air, Asset Studio can "liven" existing product shots. This feature is particularly valuable for small businesses that have high-quality product photos on white backgrounds but lack lifestyle imagery. By uploading a static photo of a t-shirt and prompting the AI to "feature a man wearing this shirt walking on a downtown sidewalk," an advertiser can have Asset Studio synthesize a realistic scene that places the product in a relatable context. This transformation from a sterile product shot to a compelling lifestyle image is crucial for feed-based surfaces like Instagram or Google’s Discover feed, where "shoppability" is driven by visual storytelling.

Bridging the Video Gap: From Static to Motion

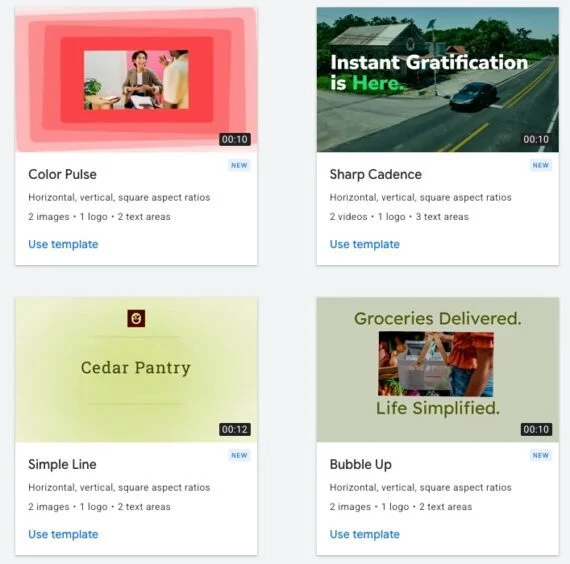

Video has become the dominant medium for consumer engagement, yet it remains the most difficult asset for many advertisers to produce. Industry data suggests that video ads on YouTube can drive up to 20% more conversions than static display ads alone. To capture this potential, Asset Studio now includes tools to convert static images into high-impact video clips.

The process is remarkably streamlined: advertisers can upload a single photo to generate a 5-second animated clip, or two photos to create a 10-second video. The AI handles the transitions, motion effects, and framing. Furthermore, Asset Studio provides a library of templates that can be customized with brand logos, specific calls to action, and key marketing messages. While these AI-generated videos may not replace high-budget cinematic productions, they fulfill a critical need for "snackable" content that fits into the fast-paced environment of YouTube Shorts and the Google Display Network.

Recognizing that consumer attention spans are shorter than ever, Google has also introduced a "Trim Video" feature. Using machine learning to identify the most salient moments in a longer video, the tool can automatically create 6-second "Bumper" ads or 10-to-15-second versions of longer commercials. This helps ensure that the core message is delivered before a viewer has the chance to skip the ad, which matters given that 65% of viewers skip online video ads as soon as the option becomes available.
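To make the idea of picking the "most salient moments" concrete, here is a minimal sketch in Python. It is not Google's method, which has not been published in detail. It assumes a hypothetical list of per-second salience scores (the kind of signal a model might produce) and simply finds the contiguous 6-second window with the highest total score. The function name `best_window` and the sample scores are illustrative only.

```python
from typing import Sequence, Tuple


def best_window(scores: Sequence[float], window: int) -> Tuple[int, int]:
    """Return (start, end) in seconds of the contiguous window with the highest total score.

    `scores` holds one hypothetical salience value per second of video.
    `end` is exclusive, so the clip covers seconds start .. end - 1.
    """
    if window <= 0 or window > len(scores):
        raise ValueError("window must be between 1 and len(scores)")

    # Score the first window, then slide it one second at a time.
    current = sum(scores[:window])
    best_sum, best_start = current, 0
    for start in range(1, len(scores) - window + 1):
        # Add the second entering the window, drop the one leaving it.
        current += scores[start + window - 1] - scores[start - 1]
        if current > best_sum:
            best_sum, best_start = current, start
    return best_start, best_start + window


# Hypothetical salience scores for a 12-second commercial (not real data).
salience = [0.1, 0.2, 0.9, 0.8, 0.7, 0.9, 0.6, 0.8, 0.2, 0.1, 0.1, 0.3]
bumper_start, bumper_end = best_window(salience, 6)
print(f"Suggested bumper cut: {bumper_start}s to {bumper_end}s")
```

A production tool would presumably also respect scene boundaries, audio, and branding moments; the sliding window only illustrates the basic selection step.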

Supporting Data and Market Implications

The push toward AI-driven creative is backed by compelling performance data. Internal Google studies indicate that advertisers whose Performance Max campaigns achieve a "Good" or "Excellent" Ad Strength rating, often by supplying a diverse range of high-quality AI-generated assets, see an average of 18% more conversions at a similar cost per action than those with lower-quality asset groups.

Furthermore, the democratization of creative tools is expected to have a profound impact on the competitive landscape. Traditionally, larger brands with massive creative budgets held a distinct advantage in visual storytelling. With Asset Studio, a solo entrepreneur can now produce a visual campaign that rivals the aesthetic quality of work from a mid-sized agency. This shift is reflected in the broader market: according to industry analysts, the market for generative AI in advertising is projected to grow from $7.8 billion in 2023 to over $19 billion by 2028.
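For readers who want to translate that projection into an annual rate, the short Python sketch below computes the implied compound annual growth rate (CAGR). It takes the article's figures at face value and treats "over $19 billion" as exactly $19 billion, so the result is a rough lower bound rather than an analyst-published number. The helper name `cagr` is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the projection above, in billions of US dollars.
# Assumption: "over $19 billion" is treated as exactly 19.0.
growth = cagr(7.8, 19.0, 2028 - 2023)
print(f"Implied annual growth: {growth:.1%}")
```

Under those assumptions the projection implies growth of a little under 20% per year over the five-year span.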

Industry Reactions and Professional Analysis

While the reception from the advertising community has been largely positive regarding the time-saving aspects, some industry experts urge a degree of caution. Creative directors have noted that while AI can generate "correct" images, it can sometimes lack the nuanced brand "soul" that comes from human-led design. "The tool is fantastic for filling inventory and testing variants," says one digital marketing executive. "But the most successful brands will be those that use AI to augment their unique brand identity, not replace it entirely."

There are also technical and ethical considerations. Google has addressed concerns regarding AI transparency by implementing SynthID, a digital watermarking technology developed by Google DeepMind. This embeds an imperceptible watermark into the pixels of AI-generated images, allowing for clear identification of synthetic media and helping to prevent the spread of misinformation in the advertising space.

The Future of the Creative Process in Digital Advertising

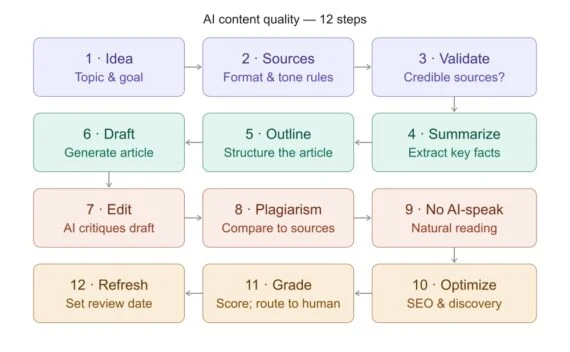

Looking ahead, the role of the digital advertiser is evolving from "creator" to "curator." The focus is shifting toward "prompt engineering" and strategic oversight. Instead of spending hours in Photoshop, advertisers will spend their time defining the parameters of the brand’s visual language and then selecting the best outputs from an AI-generated array.

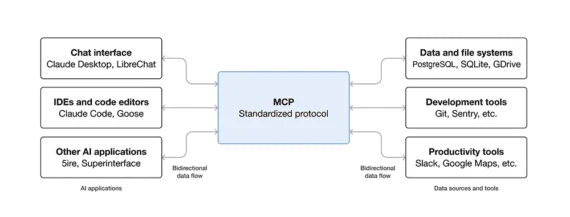

The broader implications for the industry are clear: speed, scale, and personalization are the new benchmarks for success. As Google continues to refine the Asset Studio, we can expect even deeper integration with the Gemini family of models, leading to more sophisticated video generation—perhaps even moving toward full-scale, AI-generated commercials based on a brand’s historical performance data.

In conclusion, the enhancements to Google Ads’ Asset Studio represent a significant leap forward in making high-quality advertising accessible to all. By removing the technical and financial barriers to image and video production, Google is not just selling ad space; it is providing a comprehensive creative laboratory. For advertisers, the message is clear: the future of performance is automated, and those who master the synergy between human strategy and AI-driven creativity will be the ones who thrive in the next era of digital marketing.