The Surprising Efficiency of Unsupervised Learning: How Generative Models Unlock Classification with Minimal Labels

The conventional wisdom in machine learning, particularly for tasks like image classification, has long held an implicit prerequisite: the necessity of vast quantities of meticulously labeled data. This paradigm, where each data point is painstakingly annotated by human experts, forms the bedrock of supervised learning algorithms. However, a growing body of research challenges this fundamental assumption, demonstrating that models can uncover intricate patterns and structures within data without any explicit guidance. Generative models, in particular, have emerged as powerful tools capable of organizing data into meaningful clusters during unsupervised training. When trained on image datasets, these models can naturally segregate distinct objects, categories, or even stylistic variations within their internal, abstract representations known as latent spaces. This capability raises a profound and practical question: if a model can already discern the underlying structure of data without being told what each element represents, how much explicit labeling is truly required to transform this structural understanding into a functional classifier?

This article delves into this critical question, employing a Gaussian Mixture Variational Autoencoder (GMVAE) as the primary tool for exploration. The research, building upon the foundational work of Dilokthanakul et al. (2016), investigates the efficacy of unsupervised learning in pre-structuring data for subsequent classification.

Dataset and Methodology: The EMNIST Letters Challenge

The chosen experimental ground for this research is the EMNIST Letters dataset, introduced by Cohen et al. (2017). This dataset represents a significant extension of the universally recognized MNIST dataset, which comprises handwritten digits. EMNIST Letters, by contrast, incorporates both uppercase and lowercase letters, introducing a far greater degree of ambiguity and complexity compared to the relatively distinct digits of MNIST. This increased ambiguity makes EMNIST Letters a more robust benchmark for highlighting the power and importance of probabilistic representations in discerning subtle data relationships.

It is important to note a disclaimer accompanying the research: the code and experimental setup are specifically tailored for the EMNIST and MNIST datasets and are intended for research and reproducibility purposes. Adapting this framework to other datasets would necessitate significant modifications in data preprocessing, model architecture tuning, and hyperparameter selection. The complete code and experimental details are publicly available on GitHub, fostering transparency and further investigation within the research community.

The GMVAE: Unsupervised Discovery of Data Structure

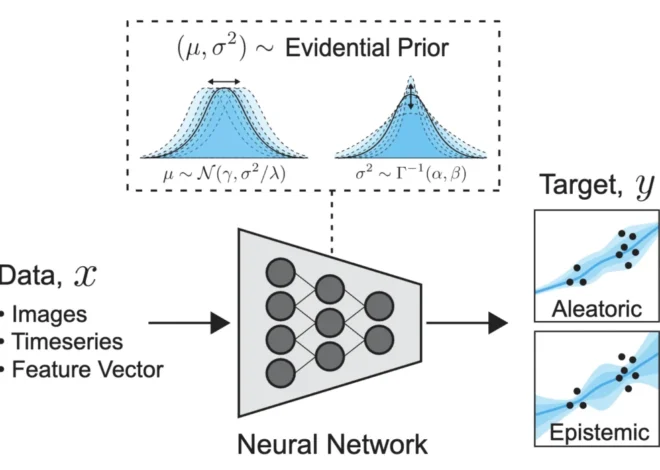

At its core, a standard Variational Autoencoder (VAE) is a generative model designed to learn a continuous latent representation of data. In this process, each input data point, denoted as $x$, is mapped to a probability distribution over a latent variable $z$, typically a multivariate normal distribution $q(z \mid x)$, known as the posterior distribution. This latent space aims to capture the essential features of the input data.

However, for effective clustering, a standard VAE with a simple Gaussian prior can fall short. The latent space often remains continuous, lacking the distinct, well-separated groups that are characteristic of well-defined clusters. This is precisely where the Gaussian Mixture Variational Autoencoder (GMVAE) introduces a crucial enhancement.

The GMVAE extends the VAE framework by replacing the standard Gaussian prior with a mixture of $K$ components, where $K$ is a pre-defined hyperparameter. This is achieved by introducing a new discrete latent variable, $c$, which governs the assignment of a data point to one of the mixture components. This integration allows the model to learn a posterior distribution not just over the continuous latent features but also over these discrete clusters. Consequently, each of the $K$ components of the mixture can be interpreted as a distinct cluster within the data. In essence, GMVAEs intrinsically discover and organize data into clusters during their unsupervised training phase.
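For reference, a Gaussian mixture prior with $K$ components indexed by a discrete variable $c$ is commonly written as below. The symbols $\pi_c$, $\mu_c$ and $\Sigma_c$ (mixture weights, component means and covariances) are notation assumed here for illustration; the exact parameterization used in the experiments may differ.

$$
p(c) = \pi_c, \qquad
p(z \mid c) = \mathcal{N}(z;\, \mu_c, \Sigma_c), \qquad
p(z) = \sum_{c=1}^{K} \pi_c \, \mathcal{N}(z;\, \mu_c, \Sigma_c)
$$

Under this form, assigning a point to a cluster amounts to asking which component $c$ most plausibly generated its latent code.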

The choice of $K$ is critical: this hyperparameter balances the model’s expressivity (its ability to capture fine-grained distinctions) with its reliability (the robustness and purity of the learned clusters). In this research, $K = 100$ was selected as a pragmatic compromise. This number is sufficiently large to capture subtle stylistic variations within each letter class (e.g., differentiating between uppercase ‘F’ and lowercase ‘f’ through distinct clusters) while remaining small enough to ensure that each learned cluster is adequately represented in the available labeled data. Even with this choice, clusters are not perfectly pure; for instance, one cluster might predominantly represent the letter ‘T’ but also contain a notable number of ‘J’ samples, demonstrating the inherent ambiguity in visual data.

From Clusters to Classification: The Labeling Challenge

Once the GMVAE has undergone unsupervised training and effectively organized the data into clusters, each data point $x$ is associated with a probability distribution over these clusters, denoted as $q(c \mid x)$. The critical next step is to imbue these discovered clusters with semantic meaning, i.e., to connect them to the actual classification labels. This transition from unsupervised clustering to supervised classification necessitates the use of a labeled subset of the data.

A straightforward baseline approach, often referred to as "cluster-then-label," involves first clustering the entire dataset using an unsupervised method (such as K-means or a Gaussian Mixture Model) and then assigning a definitive label to each cluster based on the majority label of the data points within that cluster from the labeled subset. This strategy relies on a "hard assignment," where each data point is definitively placed into a single cluster before being assigned a label.

In contrast, the method explored here deviates from this rigid approach. Instead of forcing each data point into a single cluster, it leverages the full posterior distribution $q(c \mid x)$. This allows each data point to be represented not as a member of a single discrete cluster but as a probabilistic mixture across multiple clusters. This can be viewed as a probabilistic generalization of the traditional cluster-then-label paradigm.

Theoretical Minimums: How Little Supervision is Truly Needed?

Theoretically, in an idealized scenario where the discovered clusters are perfectly pure (each cluster exclusively represents a single class) and equally sized, very little labeled data would be required. If one could strategically select which data points to label, then a single labeled example per cluster would suffice, totaling only $K$ labels. For the EMNIST Letters dataset with $N = 145{,}600$ total samples and $K = 100$ clusters, this would represent a mere 0.07% of the data.

However, a more realistic assumption is that labeled samples are drawn randomly from the dataset. Under this assumption, and still considering equally sized clusters, theoretical calculations can provide an approximate lower bound on the supervision needed to ensure that all clusters are represented with a chosen level of confidence. For $K = 100$, this calculation suggests that approximately 0.6% labeled data is the minimum required to cover all clusters with 95% confidence. Relaxing the equal-size assumption leads to more complex inequalities, but the core takeaway remains: the theoretical minimum supervision is remarkably low.
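The article does not show the calculation itself, so the following is only one plausible way to arrive at a figure of this order. It uses a union bound for a coupon-collector-style problem: with $K$ equally sized clusters and $n$ labels drawn uniformly at random, the probability that at least one cluster is missed is at most $K(1 - 1/K)^n$. The function name `min_labels_to_cover` and the sampling-with-replacement approximation are assumptions made for this sketch.

```python
import math

def min_labels_to_cover(num_clusters: int, confidence: float) -> int:
    """Smallest n such that the union bound K * (1 - 1/K)**n <= 1 - confidence.

    Assumes equally sized clusters and labels drawn uniformly at random
    (with replacement, a reasonable approximation when n is far below N).
    """
    failure = 1.0 - confidence
    per_draw_miss = 1.0 - 1.0 / num_clusters
    n = math.log(failure / num_clusters) / math.log(per_draw_miss)
    return math.ceil(n)

N = 145_600   # EMNIST Letters sample count quoted in the article
K = 100       # number of clusters quoted in the article

best_case = K                               # one hand-picked label per cluster
random_case = min_labels_to_cover(K, 0.95)  # labels drawn at random

print(f"hand-picked: {best_case} labels = {100 * best_case / N:.2f}% of the data")
print(f"random draw: {random_case} labels = {100 * random_case / N:.2f}% of the data")
```

Under these assumptions the bound comes out at a little over 750 labels, roughly half a percent of the data, which is the same order as the approximately 0.6% quoted above. The article's own derivation may use a slightly different bound.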

These theoretical calculations, however, are inherently optimistic. In practice, discovered clusters are rarely perfectly pure. A single cluster might contain a mix of labels, such as ‘i’ and ‘l’, in significant proportions, complicating the direct mapping from cluster to label.

Decoding the Labels: Hard vs. Soft Approaches

With the GMVAE trained and data points associated with cluster distributions, the challenge becomes assigning accurate labels to the remaining, unlabeled data. Two primary decoding strategies are explored: hard decoding and soft decoding.

Hard Decoding: The Simplest Approach

Hard decoding follows a more direct, albeit less nuanced, path. First, each cluster $c$ is assigned a unique label $\ell(c)$ by examining the labeled subset: the most frequent label among the labeled points assigned to that cluster is chosen as the cluster’s definitive label. Subsequently, for any unlabeled image $x$, the algorithm identifies its most probable cluster, $c_{\text{hard}}(x) = \arg\max_c q(c \mid x)$, and assigns to $x$ the label associated with that cluster, $\ell(c_{\text{hard}}(x))$.
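A minimal sketch of this procedure is given below. It is written for illustration rather than taken from the article's repository: the function names, the `fallback` argument for clusters with no labeled points, and the toy posterior values are all assumptions.

```python
from collections import Counter, defaultdict

def argmax(values):
    """Index of the largest entry in a list of numbers."""
    return max(range(len(values)), key=lambda i: values[i])

def fit_cluster_labels(labeled_posteriors, labels):
    """Map each cluster to the majority label among labeled points assigned to it."""
    votes = defaultdict(Counter)
    for posterior, label in zip(labeled_posteriors, labels):
        votes[argmax(posterior)][label] += 1
    return {cluster: counts.most_common(1)[0][0] for cluster, counts in votes.items()}

def hard_decode(posterior, cluster_to_label, fallback=None):
    """Label of the most probable cluster; `fallback` if that cluster has no labeled points."""
    return cluster_to_label.get(argmax(posterior), fallback)

# Toy data: 3 clusters; each row is a cluster posterior q(c | x) (made-up values).
labeled_posteriors = [[0.9, 0.1, 0.0], [0.8, 0.1, 0.1], [0.1, 0.7, 0.2], [0.6, 0.3, 0.1]]
labels = ["a", "a", "b", "b"]

cluster_to_label = fit_cluster_labels(labeled_posteriors, labels)
print(cluster_to_label)
print(hard_decode([0.5, 0.4, 0.1], cluster_to_label))  # most probable cluster is 0
print(hard_decode([0.1, 0.2, 0.7], cluster_to_label))  # cluster 2 has no labeled points
```

In the toy data no labeled point falls in cluster 2, so an input whose most probable cluster is 2 gets no label at all. This is the situation that arises when the labeled set is too small to cover every cluster.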

However, this hard decoding approach suffers from two significant drawbacks. Firstly, it discards the inherent uncertainty of the GMVAE. If the model is uncertain about which cluster an input belongs to, hesitating between several possibilities, hard decoding arbitrarily picks one, ignoring the valuable probabilistic information. Secondly, it operates under the strong, and often incorrect, assumption that each cluster is pure, meaning it corresponds to a single label.

Soft Decoding: Embracing Probabilistic Nuance

Soft decoding offers a more robust solution by directly addressing the limitations of hard decoding. Instead of assuming a one-to-one correspondence between clusters and labels, it utilizes the labeled subset to empirically estimate a probability vector for each label $\ell$. This vector represents the empirical probability of a data point belonging to each of the $K$ clusters, given that its true label is $\ell$. It can be interpreted as an empirical approximation of $p(c \mid \ell)$.

Concurrently, the GMVAE provides, for each input $x$, its posterior probability distribution over clusters, $q(c \mid x)$. The soft decoding rule then assigns to $x$ the label $\ell$ that maximizes the similarity between the label-specific cluster distribution and the input’s cluster posterior $q(c \mid x)$. This is achieved by maximizing a similarity score, effectively comparing the input’s cluster distribution with the expected cluster distribution for each potential label.

This soft decision rule accounts for two crucial factors: the model’s uncertainty in assigning an input to a specific cluster and the inherent impurity of clusters, where a single cluster might be associated with multiple labels to varying degrees. It can be interpreted as a comparison between the posterior distribution of clusters given an input ($q(c \mid x)$) and the probabilistic association of clusters with a given label ($p(c \mid \ell)$), selecting the label whose cluster distribution most closely matches the input’s.
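The rule can be sketched as follows. Two choices here are assumptions, since the text does not pin them down: the label-specific vector is estimated by averaging the cluster posteriors of the labeled points carrying that label, and the similarity score is a plain dot product. The function names and toy values are likewise illustrative.

```python
from collections import defaultdict

def fit_label_profiles(labeled_posteriors, labels):
    """Average q(c | x) over the labeled points of each label: an estimate of p(c | label)."""
    sums, counts = {}, defaultdict(int)
    for posterior, label in zip(labeled_posteriors, labels):
        if label not in sums:
            sums[label] = [0.0] * len(posterior)
        sums[label] = [s + p for s, p in zip(sums[label], posterior)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}

def soft_decode(posterior, label_profiles):
    """Label whose cluster profile has the largest dot product with q(c | x)."""
    def score(label):
        return sum(p * m for p, m in zip(posterior, label_profiles[label]))
    return max(label_profiles, key=score)

# Toy data: 3 clusters; each row is a cluster posterior q(c | x) (made-up values).
labeled_posteriors = [[0.9, 0.1, 0.0], [0.8, 0.1, 0.1], [0.1, 0.7, 0.2], [0.6, 0.3, 0.1]]
labels = ["a", "a", "b", "b"]

label_profiles = fit_label_profiles(labeled_posteriors, labels)
print(soft_decode([0.5, 0.4, 0.1], label_profiles))
print(soft_decode([0.1, 0.2, 0.7], label_profiles))
```

Because every labeled point contributes some probability mass to every cluster, the second query still receives a label even though no labeled point has cluster 2 as its most probable cluster.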

A Concrete Illustration: Why Soft Decoding Excels

Consider a scenario where an input image, representing the letter ‘e’, is presented to the model. The GMVAE might produce a posterior cluster distribution that is not overwhelmingly concentrated on a single cluster. Instead, it might assign significant probability mass to several clusters, some of which are strongly associated with ‘e’, while others might have a weaker association or even be predominantly linked to a different letter, say ‘c’.

In such a case, hard decoding would identify the single most probable cluster. If this cluster, despite its high probability, is predominantly associated with ‘c’ in the labeled data, the hard decoder would incorrectly classify the image as ‘c’. This happens because it discards all other probabilistic information.

Soft decoding, however, aggregates the evidence from all clusters. It calculates a weighted score for each potential label, where the weights are derived from the input’s cluster posterior probabilities and the empirically learned cluster-to-label associations. If, even with the dominant ‘c’-associated cluster, the combined evidence from other clusters strongly linked to ‘e’ outweighs the ‘c’ signal, soft decoding will correctly predict ‘e’. This highlights the crucial advantage of soft decoding: it leverages the full richness of the generative model’s probabilistic output, acknowledging and utilizing uncertainty rather than discarding it.
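The scenario can be made concrete with a few invented numbers. Everything in the sketch below is hypothetical: four clusters, a posterior for one image of ‘e’, and label-specific cluster vectors for ‘c’ and ‘e’. The cluster labels used by hard decoding are derived from those vectors under the additional assumption that both letters have equally many labeled examples, and the soft score is assumed to be a dot product.

```python
# Hypothetical numbers chosen only to reproduce the scenario described above.
posterior = [0.40, 0.30, 0.30, 0.00]      # q(c | x) for an image of 'e'
label_profiles = {                         # assumed estimates of p(c | label)
    "c": [0.50, 0.05, 0.05, 0.40],
    "e": [0.10, 0.45, 0.45, 0.00],
}
num_clusters = len(posterior)

# Hard decoding: with equal label counts, each cluster takes the label that
# puts the most mass on it, and the input takes its top cluster's label.
cluster_label = [
    max(label_profiles, key=lambda label: label_profiles[label][k])
    for k in range(num_clusters)
]
top_cluster = max(range(num_clusters), key=lambda k: posterior[k])
hard_prediction = cluster_label[top_cluster]

# Soft decoding: dot product between the posterior and each label's vector.
scores = {
    label: sum(p * m for p, m in zip(posterior, profile))
    for label, profile in label_profiles.items()
}
soft_prediction = max(scores, key=scores.get)

print("cluster labels:", cluster_label)
print("hard prediction:", hard_prediction)
print("soft scores:", scores)
print("soft prediction:", soft_prediction)
```

With these numbers the top cluster carries only 40% of the posterior mass and is a ‘c’ cluster, so hard decoding answers ‘c’. The two ‘e’ clusters jointly carry 60%, which is enough for the soft score of ‘e’ to exceed that of ‘c’.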

Practical Performance: Quantifying Label Efficiency

Moving beyond theoretical considerations, the research empirically evaluates the performance of the GMVAE-based classifier across varying amounts of labeled data. The primary objectives are to:

- Quantify the minimum number of labels required to achieve competitive classification accuracy.

- Demonstrate the superiority of soft decoding over hard decoding, especially in low-label regimes.

The GMVAE classifier, utilizing both hard and soft decoding, is compared against standard machine learning baselines: logistic regression, a Multi-Layer Perceptron (MLP), and XGBoost. The experiments involve progressively increasing the number of labeled samples and evaluating the accuracy on the remaining data. Results are presented as mean accuracy with 95% confidence intervals, averaged over five random seeds to ensure statistical robustness.
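The article does not say how the confidence intervals were computed. One common choice for a handful of runs is a Student-t interval, sketched below; the function name `mean_with_ci95` and the accuracy values are placeholders, not results from the experiments.

```python
import math
import statistics

def mean_with_ci95(values, t_critical=2.776):
    """Mean and half-width of a 95% Student-t interval.

    The default t_critical = 2.776 is the two-sided 95% value for
    4 degrees of freedom, so it is only valid for exactly five runs.
    """
    mean = statistics.mean(values)
    half_width = t_critical * statistics.stdev(values) / math.sqrt(len(values))
    return mean, half_width

# Placeholder accuracies for five random seeds (not results from the article).
accuracies = [0.79, 0.81, 0.80, 0.78, 0.82]
mean, half_width = mean_with_ci95(accuracies)
print(f"accuracy = {mean:.3f} +/- {half_width:.3f}")
```

With only five seeds the t critical value is noticeably larger than the normal-approximation value of 1.96, so the interval is wider than a normal approximation would suggest.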

The findings are striking. Even with an extremely sparse set of labeled samples, the GMVAE-based classifier demonstrates surprisingly strong performance. Crucially, soft decoding consistently and significantly outperforms hard decoding when supervision is scarce. For instance, with as few as 73 labeled samples (meaning many clusters are likely unrepresented in the labeled set), soft decoding achieves an absolute accuracy gain of approximately 18 percentage points over hard decoding.

Furthermore, when provided with labels for just 0.2% of the data (around 291 samples out of 145,600, or roughly three labeled examples per cluster), the GMVAE classifier with soft decoding reaches an accuracy of 80%. In stark contrast, XGBoost, a powerful gradient boosting algorithm, requires labels for approximately 7% of the data, about 35 times more supervision, to achieve a comparable performance level.
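The figures in this comparison follow from simple arithmetic on the quantities quoted in the article, as the short check below shows. The variable names are illustrative.

```python
N = 145_600               # total EMNIST Letters samples quoted in the article
K = 100                   # number of GMVAE clusters quoted in the article
gmvae_fraction = 0.002    # 0.2% of the data labeled
xgboost_fraction = 0.07   # approximately 7% of the data labeled

gmvae_labels = round(N * gmvae_fraction)
labels_per_cluster = gmvae_labels / K
supervision_ratio = round(xgboost_fraction / gmvae_fraction)

print(f"{gmvae_labels} labels, {labels_per_cluster:.1f} per cluster, "
      f"{supervision_ratio}x more labels for XGBoost")
```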

This significant disparity underscores a pivotal insight: a substantial portion of the structural information necessary for classification is already implicitly learned during the unsupervised training phase of the GMVAE. The role of labels, in this context, is not to build the feature representation from scratch but rather to interpret the rich, pre-existing structure that the model has already discovered.

Conclusion: A Paradigm Shift Towards Label-Efficient Learning

The experiments conducted with the Gaussian Mixture Variational Autoencoder, trained entirely without labels, demonstrate a compelling paradigm for building effective classifiers with minimal supervision. The research shows that a classifier can reach about 80% accuracy on EMNIST Letters with labels for as little as 0.2% of the data. The fundamental observation is that unsupervised generative models, like the GMVAE, learn a significant portion of the classification structure intrinsically. Labels are not employed to construct representations anew but serve the crucial purpose of interpreting the clusters that the model has already autonomously discovered.

While a simple hard decoding strategy offers a reasonable starting point, leveraging the full posterior distribution over clusters through soft decoding yields a consistent improvement in accuracy. The gain is often small, but it grows when supervision is scarce or the model is uncertain, reaching roughly 18 percentage points with only 73 labeled samples. This approach effectively utilizes the probabilistic nuances of the generative model.

More broadly, this research points towards a promising direction for label-efficient machine learning. The core idea is that generative models can learn meaningful representations of data in an unsupervised manner, and subsequent labels are primarily used for "naming" or interpreting what has already been learned, rather than for building the foundational understanding. This paradigm shift has profound implications for fields where data labeling is costly, time-consuming, or simply infeasible, suggesting that in many real-world applications, the true bottleneck is not the lack of labeled data but the efficient utilization of unsupervised learning capabilities.

This work was conducted using a custom implementation of the GMVAE and evaluation pipeline. All experiments and findings are detailed in accompanying research materials, fostering transparency and further exploration within the machine learning community.

This work is licensed under the Creative Commons Attribution 4.0 International License. To view a copy of this license, visit https://creativecommons.org/licenses/by/4.0/