The AI Boom’s Public Sector Frontier: Navigating Constraints with Purpose-Built Small Language Models

The transformative power of artificial intelligence (AI) is no longer confined to the private sector; it is rapidly permeating every industry, including the public sector. Government institutions worldwide are facing increasing pressure to embrace AI technologies to enhance efficiency, improve services, and address complex societal challenges. However, the path to AI adoption for public sector organizations is fraught with unique challenges, distinct from those faced by their commercial counterparts. Chief among these are stringent requirements around data security, governance, and operational resilience. In this intricate landscape, purpose-built Small Language Models (SLMs) are emerging as a promising and practical solution, offering a viable route to operationalizing AI within the demanding confines of government operations.

The urgency for public sector AI adoption is underscored by significant apprehension regarding data security. A comprehensive study by Capgemini revealed that a striking 79 percent of public sector executives globally express reservations about the data security implications of AI. This concern is not unfounded, given the exceptionally sensitive nature of government data and the robust legal and ethical obligations surrounding its use. Han Xiao, vice president of AI at Elastic, articulates this critical constraint: "Government agencies must be very restricted about what kind of data they send to the network. This sets a lot of boundaries on how they think about and manage their data." This fundamental need for absolute control over sensitive information profoundly influences how AI solutions can be conceived, deployed, and managed within public sector environments.

Unique Operational Challenges in the Public Sector

Unlike the private sector, which often operates with assumptions of ubiquitous cloud connectivity, centralized infrastructure, a degree of model transparency, and fluid data movement, public sector entities grapple with a fundamentally different operational reality. For many government institutions, accepting these standard private sector assumptions could range from being inadvisable to outright impossible.

Government agencies are mandated to ensure that their data remains under their direct control, that information can be rigorously checked and verified, and that operational disruptions are minimized to an absolute degree. Furthermore, these organizations frequently operate in environments characterized by limited, unreliable, or even absent internet connectivity. These compounding complexities act as significant barriers, preventing many promising AI pilot projects within the public sector from advancing beyond the experimental phase.

"Many people undervalue the operating challenge of AI," Xiao observes. "The public sector needs AI to perform reliably on all kinds of data, and then to be able to grow without breaking. Continuity of operations is often underestimated." This sentiment is echoed by findings from an Elastic survey of public sector leaders, which indicated that 65 percent of them struggle with the continuous use of data in real time and at scale.

Infrastructure limitations further exacerbate these challenges. Government organizations often face difficulties in acquiring the specialized hardware, such as Graphics Processing Units (GPUs), which are essential for both training and running complex AI models. As Xiao points out, "Government doesn’t often purchase GPUs, unlike the private sector—they’re not used to managing GPU infrastructure. So accessing a GPU to run the model is a bottleneck for much of the public sector." This reliance on hardware that is not typically part of their procurement cycles presents a significant hurdle.

The Rise of Small Language Models (SLMs)

The stringent, non-negotiable requirements inherent in public sector operations render traditional, large-scale language models (LLMs) often untenable. LLMs, with their vast parameter counts and extensive computational demands, typically require robust cloud infrastructure and continuous connectivity, making them unsuitable for environments with limited resources or strict data sovereignty mandates.

In contrast, Small Language Models (SLMs) offer a more practical and adaptable solution. These specialized AI models, typically possessing billions rather than hundreds of billions of parameters, are significantly less computationally intensive. Crucially, SLMs can be housed locally, on-premises, or on secure government networks, thereby granting agencies enhanced security and greater control over their data.

The notion that only the largest models can deliver significant value is being challenged. An empirical study exploring the capabilities of SLMs found that they performed as well as, or in some cases, even better than their LLM counterparts on specific tasks. This suggests that the public sector does not necessarily need to pursue the development of ever-larger models hosted in distant, centralized locations. SLMs enable sensitive information to be utilized effectively and efficiently, circumventing the substantial operational complexities associated with managing massive, cloud-dependent AI systems.

Xiao aptly illustrates this point: "It is easy to use ChatGPT to do proofreading. It’s very difficult to run your own large language models just as smoothly in an environment with no network access." This highlights the critical distinction between the convenience of public, general-purpose LLMs and the demanding, secure operational needs of government.

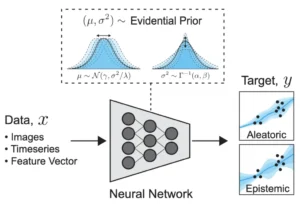

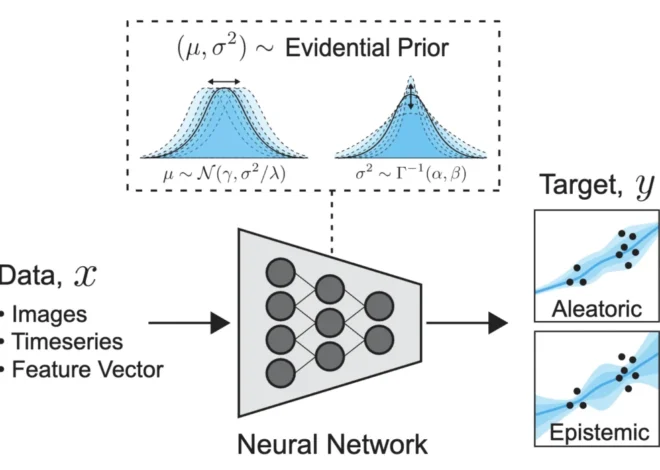

SLMs are designed to be purpose-built for the specific needs of the department or agency that will employ them. This specialization ensures that the models are tailored to the unique data sets and operational workflows. Data is stored securely outside the model itself and is only accessed when explicitly queried. This approach, combined with carefully engineered prompts, ensures that only the most relevant information is retrieved, leading to more accurate and contextually appropriate responses. By leveraging techniques such as smart retrieval, vector search, and verifiable source grounding, AI systems can be constructed that precisely cater to the distinct requirements of public sector operations.

This strategic shift signifies a pivotal moment in public sector AI adoption, potentially moving towards bringing the AI tool to the data, rather than the more conventional approach of sending sensitive data out into the cloud. Industry analysts are already predicting this trend. Gartner, for instance, forecasts that by 2027, organizations will be using small, task-specific AI models three times more frequently than general-purpose large language models. This prediction underscores the growing recognition of SLMs as a more practical and scalable AI solution for a wide array of organizational needs, particularly within regulated sectors.

Revolutionizing Public Sector Search and Data Management

When many in the public sector consider AI, their immediate thought might be of conversational chatbots like ChatGPT. However, as Xiao emphasizes, the potential for AI in government extends far beyond such applications. "AI can revolutionize how the government searches and manages the large amounts of data they have," he states.

One of the most immediate and impactful opportunities for AI in the public sector lies in dramatically improving search capabilities. Like many organizations, government entities possess vast repositories of unstructured data, encompassing technical reports, procurement documents, meeting minutes, invoices, and countless other critical records. Traditional search methods often struggle to efficiently extract meaningful insights from this data. Modern AI, however, can deliver results sourced from a diverse range of media, including readable PDFs, scanned documents, images, spreadsheets, and even audio recordings. Furthermore, these systems can process information in multiple languages, breaking down communication barriers.

SLM-powered systems can index this disparate data to provide tailored responses, assist in drafting complex texts in any language, and, crucially, ensure that all outputs are legally compliant. "The public sector has a lot of data, and they don’t always know how to use this data. They don’t know what the possibilities are," Xiao observes, highlighting a common challenge that AI can help overcome.

Beyond mere retrieval, AI can empower government employees to interpret the data they access with unprecedented depth. "Today’s AI can provide you with a completely new view of how to harness that data," Xiao explains. A well-trained SLM can be instrumental in interpreting complex legal norms, extracting nuanced insights from public consultations, supporting data-driven executive decision-making, and enhancing public access to essential services and administrative information. These capabilities can lead to profound improvements in the efficiency and effectiveness of public sector operations.

The Promise of Efficiency and Trust with SLMs

Focusing on SLMs fundamentally shifts the conversation from model comprehensiveness to operational efficiency. LLMs, with their extensive computational demands, incur significant performance and cost overheads, often requiring specialized hardware that many public entities find prohibitively expensive. While SLMs do necessitate some capital expenditure, they are considerably less resource-intensive than LLMs. This reduced resource footprint translates into lower costs and a diminished environmental impact, aligning with growing sustainability goals within government.

Furthermore, public sector agencies are frequently subject to stringent audit requirements. SLM algorithms can be meticulously documented and certified for transparency, providing auditable trails and clear explanations of their decision-making processes. This transparency is crucial for building trust and ensuring accountability. For countries, particularly in Europe, with robust privacy regulations like the General Data Protection Regulation (GDPR), SLMs can be specifically designed and implemented to ensure compliance with these data protection mandates.

The use of tailored training data is paramount to producing more targeted results, thereby reducing the incidence of errors, bias, and the phenomenon of "hallucinations" – where AI generates plausible but incorrect information. As Xiao notes, "Large language models generate text based on what they were trained on, so there is a cut-off date when they were trained. If you ask about anything after that, it will hallucinate. We can solve this by forcing the model to work from verified sources." This emphasis on verifiable sources, combined with local data storage, not only enhances accuracy but also minimizes risks by keeping sensitive data on local servers or even on specific devices. This is not about isolation but about achieving strategic autonomy, fostering trust, resilience, and relevance in AI deployments.

By prioritizing task-specific models designed for environments that process data locally, and by implementing continuous monitoring of performance and impact, public sector organizations can cultivate enduring AI capabilities that reliably support real-world decision-making. Xiao offers a practical piece of advice for those embarking on this journey: "Do not start with a chatbot; start with search. Much of what we think of as AI intelligence is really about finding the right information." This foundational approach, leveraging AI to master the challenge of information retrieval, lays the groundwork for more sophisticated AI applications and unlocks the immense potential of data within government operations. The future of AI in the public sector is not about replicating the vastness of LLMs but about harnessing the precision and control offered by purpose-built SLMs.