Building Trust in the AI Era with Privacy-Led UX

Privacy-led user experience (UX) is a design philosophy that treats transparency around data collection and usage as an integral part of the customer relationship. This approach, still an underused opportunity in digital marketing, reframes user consent not as a tick-box compliance exercise but as the opening move in an ongoing, meaningful customer dialogue. For companies that implement privacy-led UX well, the rewards extend beyond consent rates to a less tangible but more valuable and durable asset: consumer trust.

This burgeoning field has only recently garnered significant attention within the enterprise landscape. Adelina Peltea, Chief Marketing Officer at Usercentrics, has observed a palpable shift in industry sentiment. "Even just a few years ago, this space was viewed more as a trade-off between growth and compliance," Peltea remarked. "However, as the market has matured, there’s been a greater focus on how to strategically tie well-designed privacy experiences to tangible business growth." This evolution suggests a growing recognition that prioritizing user privacy can, in fact, be a powerful driver of commercial success.

The underlying premise of privacy-led UX is that well-designed, value-forward consent experiences consistently outperform initial projections. The critical touchpoints for privacy-led UX span a range of user-facing elements: consent management platforms, clearly articulated terms and conditions, accessible privacy policies, efficient data subject access request (DSAR) tools, and, increasingly, transparent disclosures about the use of artificial intelligence (AI) in data processing.

A report, "Building Trust in the AI Era with Privacy-Led UX," published by MIT Technology Review Insights in collaboration with Usercentrics, explores the relationship between data transparency and customer trust. It examines how that trust can bolster business performance and how organizations can sustain it as AI systems make consent processes more complex.

The Evolving Landscape of User Consent

Historically, the digital marketing and technology sectors have grappled with a fundamental tension: the drive for personalization and data-driven insights versus the imperative to protect user privacy. Early approaches to data consent often leaned towards a perfunctory, compliance-driven model. This typically involved lengthy, jargon-filled privacy policies and consent banners that nudged users to "accept all" with minimal engagement. Such practices, while technically fulfilling regulatory requirements, did little to build genuine understanding or trust with users.

The advent of stringent data privacy regulations, such as the General Data Protection Regulation (GDPR) in Europe and the California Consumer Privacy Act (CCPA) in California, marked a significant turning point. These regulations not only imposed legal obligations but also gave consumers greater control over their personal data. This regulatory shift catalyzed a re-evaluation of how companies interact with users regarding their data.

The period between 2016 and 2018, leading up to the GDPR’s implementation, saw a surge in companies developing basic consent mechanisms. However, the focus was predominantly on avoiding fines rather than on cultivating user relationships. As Peltea notes, the market sentiment was characterized by a "trade-off between growth and compliance." This meant that many businesses viewed privacy as a hurdle to overcome, a cost center that detracted from their core marketing objectives.

From Compliance to Connection: The Rise of Privacy-Led UX

The narrative began to shift in the subsequent years. As consumers became more aware of their data rights and as the capabilities of AI in data analysis became more sophisticated, the limitations of the old model became apparent. Companies started to realize that a purely compliance-driven approach was not only insufficient but also potentially detrimental to their long-term brand reputation and customer loyalty.

Privacy-led UX emerged as a strategic response to this evolving landscape. It recognizes that user consent is not a singular event but rather the beginning of a continuous dialogue. By being transparent about what data is collected, why it is collected, and how it will be used, companies can empower users to make informed decisions. This empowerment fosters a sense of agency and respect, which are foundational elements of trust.

The report by MIT Technology Review Insights and Usercentrics highlights that this strategic shift is yielding tangible results. Companies that embrace privacy-led UX are finding that their consent experiences not only achieve higher opt-in rates than initially projected but also lead to more engaged and loyal customer bases. This is because users are more likely to consent to data collection when they understand its value and when they feel their privacy is respected.

Key Findings: Data Transparency as a Trust Catalyst

The research underpinning the report underscores several critical findings:

- Data Transparency Cultivates Consumer Trust: The study provides empirical evidence that clear and accessible information about data collection and usage directly correlates with increased consumer trust. When users feel informed and in control, they are more likely to develop a positive perception of a brand. This is particularly relevant in an era where data breaches and misuse are frequent concerns.

- Trust Drives Business Performance: The report elucidates the direct link between consumer trust and improved business outcomes. This includes higher customer retention rates, increased lifetime value, greater brand advocacy, and ultimately, enhanced revenue. Consumers who trust a brand are more likely to make repeat purchases, recommend it to others, and be more forgiving of minor service issues.

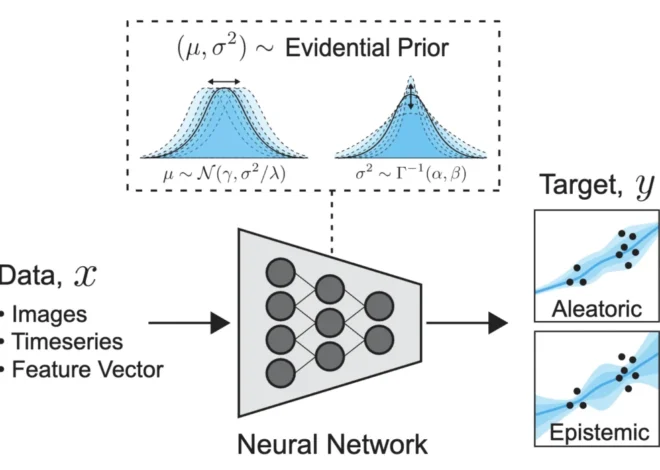

- Navigating AI’s Complexity with Transparency: A significant portion of the report addresses the growing challenge of maintaining trust as AI systems become more integrated into data processing and decision-making. The way AI processes vast datasets and makes predictive inferences can be opaque to the average user. Privacy-led UX offers a framework for disclosing AI’s role in data usage, explaining its purpose, and ensuring that its application aligns with user expectations and ethical considerations. This could involve clearly stating when AI is used for personalization, fraud detection, or content recommendation, and giving users ways to understand or influence these AI-driven processes.

Practical Applications of Privacy-Led UX

The implementation of privacy-led UX involves a multi-faceted approach across various customer touchpoints. These include:

- Consent Management Platforms (CMPs): Modern CMPs go beyond simple cookie banners. They offer granular control, allowing users to choose precisely which types of data they are comfortable sharing and for what purposes. These platforms are designed to be intuitive and user-friendly, presenting information in a clear and concise manner.

- Terms and Conditions and Privacy Policies: These foundational documents are being reimagined. Instead of dense legalistic prose, there’s a move towards plain language summaries, interactive elements, and readily accessible FAQs that address common user concerns. The goal is to make these essential legal documents understandable and actionable for the average user.

- Data Subject Access Request (DSAR) Tools: Empowering users to request access to their data, understand how it’s being used, and request its deletion or correction is a cornerstone of privacy-led UX. Efficient and user-friendly DSAR tools not only fulfill legal obligations but also demonstrate a company’s commitment to data governance and user rights.

- AI Data Use Disclosures: As AI becomes more prevalent, transparently disclosing its role in data use is paramount. This could involve explaining how AI algorithms are used to personalize experiences, make recommendations, or analyze user behavior. Giving users insight into these processes, and potentially the option to opt out of certain AI-driven applications, is crucial for maintaining trust.
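
For readers who think in data structures, the granular, purpose-level choices described in the list above can be pictured as a small consent record. The following is a minimal sketch in Python, not taken from the report or from any particular CMP product. The class name `ConsentRecord`, its methods, and the purpose names (`analytics`, `marketing`, `ai_personalization`) are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, Optional

# Hypothetical purpose names, chosen for illustration only.
PURPOSES = ("analytics", "marketing", "ai_personalization")


@dataclass
class ConsentRecord:
    """Per-user, per-purpose consent choices (illustrative, not a real CMP API)."""

    user_id: str
    choices: Dict[str, bool] = field(default_factory=dict)
    updated_at: Optional[datetime] = None

    def set_choice(self, purpose: str, granted: bool) -> None:
        """Record the user's decision for one purpose and timestamp the change."""
        if purpose not in PURPOSES:
            raise ValueError(f"unknown purpose: {purpose}")
        self.choices[purpose] = granted
        self.updated_at = datetime.now(timezone.utc)

    def allows(self, purpose: str) -> bool:
        """Default-deny: a purpose the user never decided on counts as not consented."""
        return self.choices.get(purpose, False)


record = ConsentRecord(user_id="user-123")
record.set_choice("analytics", True)            # user opts in to analytics
record.set_choice("ai_personalization", False)  # user opts out of AI-driven personalization

print(record.allows("analytics"))           # True
print(record.allows("ai_personalization"))  # False
print(record.allows("marketing"))           # False (never decided, so denied by default)
```

Treating undecided purposes as denied is a design assumption of this sketch: it keeps AI-driven uses of data off until the user has made an explicit choice, which matches the opt-out option described above.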

The Future Outlook: Trust as a Competitive Differentiator

The insights gleaned from the MIT Technology Review Insights and Usercentrics report suggest a fundamental shift in how businesses will compete in the digital age. In a market saturated with similar products and services, consumer trust is emerging as a powerful and sustainable differentiator. Companies that proactively embrace privacy-led UX are not just complying with regulations; they are investing in their brand reputation and building deeper, more resilient relationships with their customers.

The report is available for download, offering a deeper dive into the research methodologies, data analysis, and strategic recommendations for organizations looking to navigate the complexities of data privacy and AI in the pursuit of enhanced consumer trust.

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff. It was researched, designed, and written by human writers, editors, analysts, and illustrators. This includes the writing of surveys and collection of data for surveys. AI tools that may have been used were limited to secondary production processes that passed thorough human review.