The Evolution of Digital Discovery: Why Traditional Search Engine Optimization Remains the Critical Foundation for Visibility in the Generative AI Era

The rapid proliferation of large language models and generative AI platforms has fundamentally transformed the landscape of digital information retrieval, yet the underlying mechanics of visibility remain tethered to the decades-old principles of search engine optimization. As platforms such as ChatGPT, Perplexity, and Google Gemini become primary interfaces for consumer research, a common misconception has emerged that traditional SEO is becoming obsolete. On the contrary, empirical evidence and architectural analysis suggest that these generative engines rely heavily on established search indexes to inform their outputs. Because large language models (LLMs) frequently query search engines like Google and Bing to verify facts, research current events, and source product recommendations, pages that fail to rank in traditional search results remain effectively invisible to the AI models currently reshaping the internet.

The Convergence of Search and Synthesis

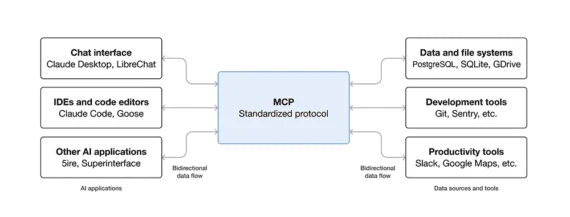

The integration of generative AI into search has given rise to a practice often called Generative Engine Optimization (GEO), which is not a departure from SEO but an evolution of it. When a user enters a complex prompt into an AI interface, the model does not rely solely on its pre-trained weights, which may be months or years out of date. Instead, it often uses a process known as Retrieval-Augmented Generation (RAG). In this framework, the system identifies the user’s intent, executes a real-time search query against a traditional index, retrieves high-ranking snippets, and synthesizes those snippets into a coherent answer. Consequently, if a merchant’s website is not optimized for traditional search crawlers, it cannot be "retrieved," and its content therefore cannot "augment" the AI’s final response.
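The retrieve-then-synthesize flow described above can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the `Page` record, the word-overlap scoring, and the `answer` function that stands in for the generation step are hypothetical and do not reflect how any real search index or LLM platform works.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    text: str
    indexed: bool  # True if a search crawler was able to index the page


def retrieve(index: list[Page], query: str, k: int = 2) -> list[Page]:
    """Return the k indexed pages sharing the most words with the query."""
    terms = set(query.lower().split())
    scored = []
    for page in index:
        if not page.indexed:
            continue  # a page outside the index can never be retrieved
        overlap = len(terms & set(page.text.lower().split()))
        if overlap:
            scored.append((overlap, page))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [page for _, page in scored[:k]]


def answer(index: list[Page], query: str) -> dict:
    """Placeholder for the generation step: join snippets and cite sources."""
    hits = retrieve(index, query)
    return {
        "summary": " / ".join(page.text for page in hits),
        "sources": [page.url for page in hits],
    }


# Hypothetical pages: page "b" has the most relevant text but is not indexed.
pages = [
    Page("https://example.com/a", "waterproof hiking boots for wide feet", True),
    Page("https://example.com/b", "best waterproof hiking boots reviewed", False),
    Page("https://example.com/c", "leather dress shoes", True),
]
result = answer(pages, "best waterproof hiking boots")
print(result["sources"])
```

In this sketch the unindexed page never reaches the synthesis step, however relevant its text is. This is the dependency the paragraph describes.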

This dependency creates a high-stakes environment for ecommerce brands and content creators. If a site lacks the foundational SEO elements required to rank on the first page of Google, the likelihood of that site being cited by an LLM drops toward zero. For businesses, this means that the visibility once sought for the sake of a "blue link" click is now equally necessary to secure a mention in an AI-generated summary.

A Chronology of the Generative Shift

To understand the current state of AI-driven discovery, one must look at the timeline of its integration into the search ecosystem.

- November 2022: The launch of ChatGPT by OpenAI introduces the public to conversational AI, sparking immediate concerns regarding the future of the traditional web traffic model.

- February 2023: Microsoft launches a new version of Bing built on an OpenAI model, later confirmed to be GPT-4, creating the first mainstream "AI-powered search engine." This marks the first time a major player explicitly links LLM output to live web citations.

- May 2023: Google announces the Search Generative Experience (SGE) at its I/O conference, signaling that the world’s largest search engine would begin prioritizing AI-synthesized answers at the top of the Search Engine Results Page (SERP).

- Late 2023: Platforms like Perplexity AI gain significant market share by positioning themselves as "answer engines" rather than search engines, relying entirely on real-time web scraping and synthesis.

- May 2024: Google rebrands SGE to "AI Overviews" and rolls it out to hundreds of millions of users, cementing the reality that search and generative AI are now a single, unified experience.

Throughout this timeline, the constant factor has been the source material: the open web. AI models have not replaced the need for high-quality, indexable content; they have simply changed how that content is consumed and credited.

Strategic Keyword Research in an Unpredictable Prompt Environment

In the traditional SEO era, keyword research was a data-driven science. Tools provided precise metrics on monthly search volumes, competition levels, and cost-per-click. In the era of generative AI, the landscape is more opaque. Currently, AI platforms provide no "prompt data" to creators. There is no centralized database showing exactly what users are asking ChatGPT or how those queries map to specific brand mentions.

Despite this lack of transparency, traditional keyword research remains the most reliable proxy for consumer intent. Third-party SEO tools that categorize keywords by intent (informational, navigational, commercial, and transactional) offer a roadmap for how to structure content. While a user might enter a 50-word prompt into an AI, that prompt is still built around core keywords, and "keyword gap" analysis still identifies what information is missing from the digital ecosystem.
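As a rough illustration of intent categorization, the sketch below labels a query with one of the four intents using simple cue words. The cue lists, the `classify_intent` function, and the `brand_terms` parameter are hypothetical. Commercial SEO tools use their own proprietary methods.

```python
# Hypothetical cue words; checked in this order, so transactional cues win.
INTENT_CUES = {
    "transactional": ("buy", "order", "coupon", "discount", "price"),
    "commercial": ("best", "review", "vs", "compare", "top"),
    "informational": ("how", "what", "why", "guide", "tips"),
}


def classify_intent(query: str, brand_terms: frozenset = frozenset()) -> str:
    """Label a query with one of the four intents using simple cue words."""
    words = query.lower().split()
    # A query naming a known brand is treated as navigational.
    if any(word in brand_terms for word in words):
        return "navigational"
    for intent, cues in INTENT_CUES.items():
        if any(word in cues for word in words):
            return intent
    return "informational"  # default when no cue word matches


for q in ("buy trail running shoes", "best trail running shoes",
          "how to clean running shoes"):
    print(q, "->", classify_intent(q))
```

A long conversational prompt can be run through the same function. The cue words it contains still point to an intent category, which is why keyword-level research remains a usable proxy.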

Marketing analysts suggest that as prompts become longer and more conversational, "long-tail" keyword optimization becomes even more critical. AI models are particularly adept at answering specific, nuanced questions. By targeting these long-tail queries through traditional research, brands can ensure their content aligns with the specific data points an LLM looks for when synthesizing a complex answer.

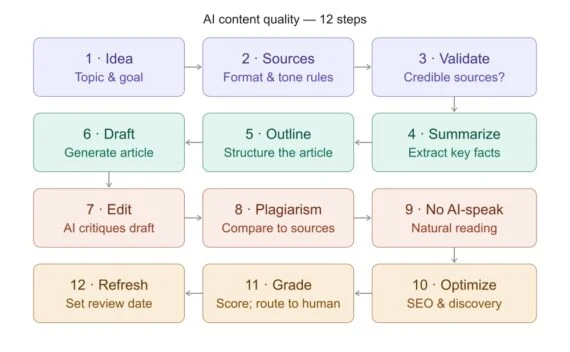

Content Optimization: Moving Beyond Traffic to Authority

The role of content has shifted from a mere traffic-driver to a source of "truth" for AI models. The most effective ecommerce content today does more than list product features; it explains how those products solve specific problems. This "problem-solution" framework is exactly what LLMs look for when a user asks for a recommendation.

There is a growing debate within the industry regarding the value of "bottom-of-the-funnel" content. Some "GEO experts" suggest focusing exclusively on queries that lead directly to a purchase. However, this is a narrow strategy. If a brand only provides transactional data, it misses the opportunity to be cited during the "research phase" of the consumer journey.

Even if an LLM summarizes a merchant’s content without providing a direct link back to the site—a phenomenon known as "zero-click" search—the brand still benefits from being part of the model’s "trusted solution set." Over time, as a brand is consistently cited as a solution provider, the model’s internal weights may begin to favor that brand in future, un-cited recommendations. Therefore, content must be optimized for the entire buying journey, ensuring that search and LLM bots find the site relevant at every stage of consumer inquiry.

Technical Infrastructure: Site Architecture for the Algorithmic Crawler

Technical SEO remains the silent engine of AI visibility. Site architecture, specifically a flat (horizontal) structure where pages are not buried deep within subdirectories, ensures that bots can crawl and index content efficiently. If a bot cannot find a page, the LLM can neither retrieve it nor learn from it.
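One way to check how "buried" pages are is to measure click depth, the number of links a crawler must follow from the homepage to reach each page. The sketch below does this with a breadth-first search over a hypothetical internal link map. The `links` dictionary and the function names are illustrative assumptions, not the output of any particular crawling tool.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search giving each page's minimum click depth from home."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def unreachable(links: dict[str, list[str]], home: str = "/") -> set[str]:
    """Pages that exist in the link map but cannot be reached from home."""
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return all_pages - set(click_depths(links, home))


# Hypothetical site: each key is a page, each value lists the pages it links to.
links = {
    "/": ["/boots", "/sale"],
    "/boots": ["/boots/waterproof"],
    "/boots/waterproof": ["/boots/waterproof/model-x"],
    "/orphan": [],
}
print(click_depths(links))
print(unreachable(links))
```

Pages with a large depth, or pages that appear in `unreachable`, are the ones a flatter structure or additional internal links would bring closer to the homepage.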

Internal linking is another critical factor. These links provide a map of topical authority, helping LLMs understand the relationship between different products and categories. A well-optimized site navigation should be:

- Intuitive: Logical flows that mimic the user’s research process.

- Crawlable: Using clean HTML rather than heavy JavaScript that might obscure content from simpler bots.

- Structured: Utilizing Schema markup to provide explicit metadata about products, reviews, and prices.

By providing clear, structured data, a business makes it easier for an LLM to correctly place its products within the model’s training data and retrieval systems.
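For readers unfamiliar with Schema markup, the sketch below builds a schema.org `Product` description as JSON-LD and wraps it in the script tag that pages typically embed. The `product_jsonld` helper and the sample product values are hypothetical, and only a few common properties are shown. A real implementation should be checked against the schema.org vocabulary and the search engines' structured data guidelines.

```python
import json


def product_jsonld(name: str, description: str, price: float, currency: str,
                   rating: float, review_count: int) -> str:
    """Build a JSON-LD script tag describing a product, its offer and rating."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    # Escape "</" so product text cannot close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


# Hypothetical product values for illustration only.
snippet = product_jsonld("Trail Boot", "Waterproof boot for wet trails",
                         129, "USD", 4.6, 212)
print(snippet)
```

Generating the markup from the same product record that populates the visible page helps keep the structured data and the on-page content consistent.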

The Mystery of Authority: Link Building and Co-Citation in LLMs

One of the most significant unknowns in the GEO space is how AI models use authority signals like backlinks. While Google’s reliance on PageRank is well-documented, the extent to which Gemini or ChatGPT uses link profiles is still a matter of industry speculation. However, many experts believe that LLMs use PageRank, or a similar authority metric, at least indirectly, because the search indexes they query already rank pages using such signals.

In this context, traditional link building takes on a new dimension. It is no longer just about passing "link juice"; it is about "co-citation." If a brand is frequently mentioned alongside top-tier competitors or within reputable industry publications, the AI begins to associate that brand with a specific category of expertise. This is achieved through:

- Journalist Outreach: Earning mentions in high-authority news outlets.

- Expert Quotes: Positioning company leadership as subject matter experts who are cited across the web.

- Social Connectivity: Building a presence on platforms where AI bots often scrape for real-time sentiment and trends.
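Co-citation can be approximated by counting how often two brands are mentioned in the same document. The sketch below does this with plain substring matching over a handful of made-up sentences. The brand names, the sample documents, and the `cocitation_counts` function are hypothetical, and nothing here is known to match how any AI model actually weighs mentions.

```python
from collections import Counter
from itertools import combinations


def cocitation_counts(documents: list[str], brands: list[str]) -> Counter:
    """Count, for each brand pair, the documents that mention both brands."""
    counts = Counter()
    for doc in documents:
        text = doc.lower()
        # Naive substring match; real text would need proper entity matching.
        present = sorted(b for b in brands if b.lower() in text)
        for pair in combinations(present, 2):
            counts[pair] += 1
    return counts


# Made-up documents standing in for news articles or industry roundups.
documents = [
    "Acme and Globex lead the trail shoe market.",
    "Globex and Initech release new boots.",
    "Acme, Globex and Initech compared.",
]
counts = cocitation_counts(documents, ["Acme", "Globex", "Initech"])
print(counts.most_common())
```

A brand that rarely appears in the same documents as the category leaders would show low counts here. Outreach and expert commentary are aimed at closing that gap.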

Broader Impact and Implications

The shift toward AI-integrated search represents a double-edged sword for the digital economy. On one hand, it offers a more personalized and efficient experience for the consumer. On the other, it threatens the traditional "click-through" business model that many publishers and merchants rely on.

Data from recent SEO studies indicates that while total search volume is holding steady, the click-through rate (CTR) for informational queries is declining as AI Overviews provide the answers directly on the SERP. This makes the "mention" as valuable as the "click." If a brand is not mentioned in the AI summary, it effectively does not exist for that user.

The implication for the future is clear: SEO is not dying; it is becoming more foundational. Success in the age of GEO is defined by visibility and authority rather than just traffic. Without the rigorous application of traditional SEO tactics—keyword research, content optimization, technical excellence, and authority building—a website’s chances of being discovered by an LLM are nearly non-existent. As generative AI continues to mature, the winners will be those who recognize that the "new" way of searching is still powered by the "old" way of organizing the world’s information.