The Dawn of Practical Robotics: How AI is Reshaping the Future of Humanoid Machines

For decades, the field of robotics existed in a curious paradox: researchers harbored grand ambitions of creating machines that could rival the complexity and adaptability of the human body, yet their practical achievements were often confined to specialized, industrial applications. The vision of sophisticated, C-3PO-like assistants capable of navigating the world and interacting seamlessly with humans remained largely in the realm of science fiction, while the tangible results often manifested as the utilitarian Roomba or the assembly-line arm. This historical gap between aspiration and reality led to a considerable degree of skepticism within Silicon Valley, a reluctance to invest heavily in the promise of truly helpful, general-purpose robots. However, a fundamental shift in how machines learn and interact with their environment has dramatically altered this landscape, unleashing a torrent of investment and renewed optimism.

The most striking indicator of this transformation is the surge in funding. In 2025 alone, companies and investors poured an unprecedented $6.1 billion into the development of humanoid robots, roughly four times the $1.5 billion invested in 2024. This influx of capital is not a mere flicker of enthusiasm; it is a direct consequence of a revolution in machine learning, particularly in the way robots are trained to perceive, understand, and act within the physical world.

The Paradigm Shift: From Explicit Rules to Adaptive Learning

Historically, the development of robotic capabilities relied on an exhaustive, and often overwhelming, process of explicit programming. Consider the task of a robot folding clothes. The traditional approach would involve meticulously crafting a vast set of rules: determining the tensile strength of various fabrics, precisely identifying garment seams, calculating exact folding distances, and devising algorithms to account for every possible orientation or wrinkle. This "craft of robotics" demanded an almost omniscient anticipation of every conceivable scenario, a monumental undertaking that quickly became intractable as the complexity of tasks increased.

The turning point arrived around 2015 with the growing adoption of machine learning techniques. Instead of hand-coding every instruction, researchers began to leverage digital simulations. In these virtual environments, robotic arms could attempt a task over and over, receiving a positive reward for success and a negative one for failure. Through millions of trials, akin to how artificial intelligence mastered complex games like Go, these systems learned to optimize their actions. This reward-driven approach, known as reinforcement learning, allowed robots to develop nuanced strategies without a human spelling out every step.
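To make the reward-driven idea concrete, here is a minimal sketch using tabular Q-learning, one common trial-and-error method. Everything in it is an assumption made for illustration: the five-position "arm", the reward values, and the function names `step`, `train`, and `greedy_policy` are not taken from any system described in this article, and real simulators are vastly richer.

```python
import random

N_POSITIONS = 5      # toy "arm" positions 0..4 on a line (stand-in for a simulator)
TARGET = 4           # reaching this position counts as success
ACTIONS = (-1, +1)   # move left, move right

def step(pos, action):
    """Apply one move; return (new position, reward, done)."""
    new_pos = min(max(pos + action, 0), N_POSITIONS - 1)
    if new_pos == TARGET:
        return new_pos, 1.0, True    # positive reward for success
    return new_pos, -0.01, False     # small penalty for every wasted step

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values purely from repeated attempts and rewards."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_POSITIONS)]  # q[position][action index]
    for _episode in range(episodes):
        pos = 0
        for _t in range(100):                     # cap the episode length
            if rng.random() < epsilon:
                a = rng.randrange(2)              # explore: random action
            else:
                a = max((0, 1), key=lambda i: q[pos][i])  # exploit best guess
            new_pos, reward, done = step(pos, ACTIONS[a])
            best_next = 0.0 if done else max(q[new_pos])
            # Nudge the estimate toward reward plus discounted future value.
            q[pos][a] += alpha * (reward + gamma * best_next - q[pos][a])
            pos = new_pos
            if done:
                break
    return q

def greedy_policy(q):
    """Best learned move for each non-target position."""
    return [ACTIONS[max((0, 1), key=lambda i: q[s][i])] for s in range(TARGET)]
```

Calling `greedy_policy(train())` returns the learned move for each position. No rule saying "move right" is ever written down; that preference emerges only from the rewards.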

The LLM Catalyst: Language, Perception, and Action Converge

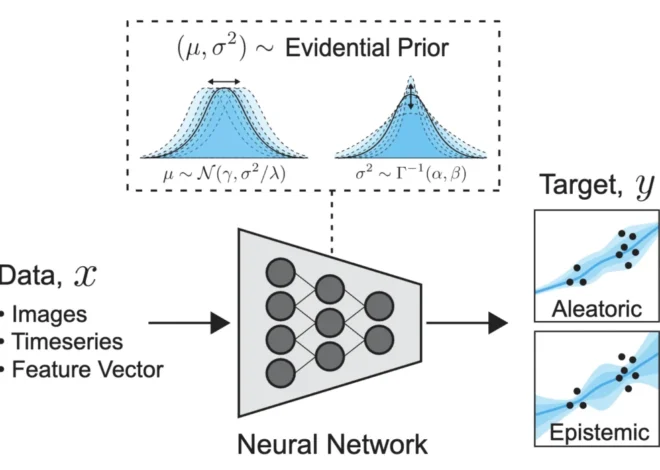

The widespread public introduction of large language models (LLMs), epitomized by ChatGPT in 2022, acted as a significant catalyst for the current boom in robotics. LLMs revolutionized natural language processing not through pre-scripted responses, but by learning to predict the most probable next word in a sequence, based on massive datasets of text. This paradigm, which demonstrated an unprecedented ability to generate coherent and contextually relevant language, soon found its parallel in robotics.
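The core idea of next-word prediction can be shown with a deliberately tiny sketch. It counts which word follows which in a short text and returns the most frequent follower. This is an assumption-laden toy: real LLMs use neural networks trained on vast token sequences rather than word-pair counts, and the names `train_bigram` and `predict_next` and the sample sentence are invented for this example.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequently observed next word, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Invented sample text, used only to exercise the functions above.
corpus = "the robot picks up the cube and the robot folds the shirt"
model = train_bigram(corpus)
```

Here `predict_next(model, "the")` yields `"robot"`, because "robot" follows "the" more often than any other word in the sample. Nothing is scripted in advance; the prediction comes entirely from statistics of the training text.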

Similar AI models were adapted to ingest a broader spectrum of data, including images from cameras, readings from other sensors, and the precise joint positions of a robot. These models could then predict the best next action and emit it as a rapid stream of motor commands, often dozens per second. This conceptual leap, from meticulously programmed actions to AI models that learn from vast quantities of multimodal data, proved to be remarkably versatile. It unlocked new possibilities for robots to engage in complex tasks, navigate unpredictable environments, and even interact with humans in more naturalistic ways. This shift, coupled with innovative approaches like deploying robots in real-world settings to facilitate continuous learning, has rekindled the ambitious dreams of early roboticists.
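The observe, predict, act cycle described above can be sketched as a simple control loop. This is only an illustration under stated assumptions: the 20-per-second rate, the `Observation` fields, and `placeholder_policy` are hypothetical, and the placeholder is a plain proportional rule standing in for a learned model, which would infer the goal from the camera image and the instruction rather than being handed it.

```python
from dataclasses import dataclass
from typing import List

CONTROL_HZ = 20  # assumed rate; the text says only "dozens per second"

@dataclass
class Observation:
    image_features: List[float]   # stand-in for encoded camera frames
    joint_positions: List[float]  # current joint angles, in radians
    instruction: str              # natural-language command

def placeholder_policy(obs: Observation, target: List[float]) -> List[float]:
    """Hypothetical stand-in for a learned model: returns joint deltas.

    The goal pose is passed in directly so the loop can run; a real
    model would have to work it out from the observation itself.
    """
    gain = 0.2
    return [gain * (t - q) for q, t in zip(obs.joint_positions, target)]

def run_episode(start: List[float], target: List[float], seconds: float) -> List[float]:
    """Repeatedly observe, predict the next action, and apply it."""
    joints = list(start)
    for _tick in range(int(seconds * CONTROL_HZ)):
        obs = Observation(
            image_features=[0.0],                 # dummy value, no camera here
            joint_positions=joints,
            instruction="move arm to the target pose",
        )
        action = placeholder_policy(obs, target)            # predict next action
        joints = [q + dq for q, dq in zip(joints, action)]  # apply motor command
    return joints
```

Running `run_episode([0.0, 0.0], [1.0, -0.5], seconds=3.0)` issues 60 small commands, each computed from the latest observation, and ends very close to the target pose. The point of the sketch is the loop structure: many small corrections per second rather than one pre-planned motion.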

A Timeline of Innovation: Key Milestones in Practical Robotics

The journey from hesitant beginnings to the current investment surge is marked by several pivotal developments and companies that have pushed the boundaries of what’s possible:

2014: Jibo – The Early Social Robot

Long before the advent of sophisticated LLMs, Cynthia Breazeal, an MIT robotics researcher, unveiled Jibo in 2014. This armless, legless, and faceless robot, resembling a lamp, was envisioned as a social companion for families. Its initial crowdfunding campaign raised $3.7 million, with early preorders priced at $749. Jibo could introduce itself and perform simple entertainment tasks like dancing; the larger aspiration was an embodied AI assistant capable of handling scheduling, emails, and storytelling.

While Jibo garnered a dedicated user base, its commercial trajectory ultimately faltered, leading to the company’s closure in 2019. In retrospect, Jibo’s limitations were significantly tied to its nascent language processing capabilities. Competing against the increasingly sophisticated voice assistants of Apple (Siri) and Amazon (Alexa), Jibo’s interactions were heavily reliant on scripted responses. These pre-approved snippets, while sometimes charming, often felt repetitive and inherently robotic, a significant hurdle for a machine designed to foster social connection. The subsequent revolution in AI-generated language has dramatically elevated the potential for such social robots, though it also introduces new challenges related to AI’s capacity for unpredictable or inappropriate outputs, as seen in some AI toys that have exhibited concerning conversational tendencies.

2018: OpenAI’s Dactyl – Mastering the Physical World Through Simulation

By 2018, a significant portion of the robotics research community had shifted away from rule-based systems towards trial-and-error learning. OpenAI embarked on an ambitious project with Dactyl, a robotic hand designed to manipulate objects. The initial training took place entirely in a virtual simulation, where Dactyl was tasked with manipulating palm-sized cubes, often with specific instructions like "Rotate the cube so the red side with the letter O faces upward."

The critical challenge, however, was bridging the gap between the simulated environment and the complexities of the real world. Subtle differences—such as variations in color perception, the elasticity of robotic fingertips, or the friction of surfaces—could cause a simulated program to fail when transferred to a physical robot. OpenAI’s solution was "domain randomization," a technique involving the creation of millions of slightly varied simulated worlds. By exposing Dactyl to a wide array of random environmental conditions (varying friction, lighting, and colors), the model became more robust and adaptable to real-world variations. This approach proved successful, enabling Dactyl to learn to solve Rubik’s Cubes, albeit with a success rate that varied depending on the scramble’s complexity.
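A rough sketch of the sampling step behind domain randomization follows. It is not OpenAI's implementation: the parameter names, the numeric ranges, and the helpers `sample_params` and `randomized_worlds` are assumptions chosen for illustration. The idea it shows is that each training episode draws its own friction, lighting, and color settings, so the policy never sees exactly the same world twice.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class SimParams:
    friction: float         # surface friction coefficient
    light_intensity: float  # relative scene brightness
    cube_hue_shift: float   # hue shift applied to cube colors, in degrees

# Hypothetical ranges, chosen only for illustration.
RANGES = {
    "friction": (0.5, 1.5),
    "light_intensity": (0.3, 1.7),
    "cube_hue_shift": (-20.0, 20.0),
}

def sample_params(rng):
    """Draw one randomized world by sampling every parameter independently."""
    return SimParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})

def randomized_worlds(n, seed=0):
    """Generate n slightly different simulated worlds, reproducibly."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(n)]
```

In a training pipeline, each element of `randomized_worlds(n)` would configure the simulator for one episode before the policy attempts the task. A policy that succeeds across the whole spread of conditions is more likely to tolerate the particular friction and lighting of the real robot.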

Despite these advances, the inherent limitations of simulation meant it played a less dominant role in subsequent robotics development. OpenAI shut down its robotics division in 2021 but has since revived it, reportedly with a renewed focus on humanoid robots, indicating the enduring appeal of physical manipulation capabilities.

2022-2025: Google DeepMind’s RT Series – Bridging Vision, Language, and Action

Google’s robotics team, under the umbrella of DeepMind, made significant strides in developing foundational models for robotics. Around 2022, their RT-1 (Robotics Transformer 1) model was trained on extensive human demonstrations covering approximately 700 distinct tasks, from picking up bags of chips to opening jars. RT-1 took in the robot’s visual perception and its own kinematic state, along with an instruction, and translated these into motor commands. It performed impressively, succeeding on 97% of tasks it had previously encountered and 76% of novel instructions.

The subsequent iteration, RT-2, released the following year, marked a significant leap by integrating general internet image data into its training regimen, mirroring the progress in vision-language models. This broad training allowed RT-2 to interpret objects and their spatial relationships within a scene with greater sophistication. Kanishka Rao, a roboticist at Google DeepMind, highlighted the expanded capabilities, noting that the model could now understand commands like "Put the Coke can near the picture of Taylor Swift." By 2025, Google DeepMind further fused these capabilities with the release of a Gemini Robotics model, enhancing its ability to comprehend and act upon natural language commands, bringing robots closer to intuitive human interaction.

2017-Present: Covariant – Collaborative Robotics for Industrial Efficiency

Founded in 2017 as a spin-off of OpenAI’s early robotics efforts, Covariant focused on a pragmatic goal: developing robotic arms for warehouse operations. Their approach involved building a platform based on foundational models, similar in principle to Google’s RT series, and deploying it in real-world logistics environments. Covariant treated these deployments as crucial data collection pipelines, continuously feeding information back to refine their AI models.

In 2024, Covariant introduced RFM-1 (Robotics Foundation Model 1), a system designed to interact with human operators as if it were a coworker. For instance, after being shown multiple sleeves of tennis balls, the robot could be instructed to move each sleeve to a designated area. Crucially, RFM-1 could also exhibit adaptive behavior, such as predicting grip challenges and proactively seeking advice on the most suitable suction cups. This level of interaction, deployed at scale, represented a significant advancement in industrial robotics.

Despite its sophistication, the system was not infallible. A demonstration in March 2024, involving kitchen items, revealed limitations when the robot was tasked with returning a banana to its original location. The robot, after several incorrect attempts with other items, eventually completed the task. Co-founder Peter Chen acknowledged that the model "doesn’t understand the new concept" of retracing steps, highlighting that performance is contingent on the availability of robust training data. This underscores a persistent challenge in robotics: ensuring reliability in environments with limited or novel training data. Amazon, which operates an extensive network of warehouses, subsequently hired Chen and fellow founder Pieter Abbeel and is now licensing Covariant’s technology.

Present: Agility Robotics’ Digit – Humanoids Enter the Workforce

The current wave of investment in robotics startups is heavily directed towards humanoid robots, machines designed to integrate seamlessly into human workspaces without the need for extensive infrastructure modifications. While the concept is compelling, practical implementation has often been limited to pilot programs and test zones.

However, Agility Robotics’ humanoid robot, Digit, is breaking this mold. With a design prioritizing function over anthropomorphic aesthetics—featuring exposed joints and a distinct, non-humanoid head—Digit is being deployed in real-world logistics operations. Companies like Amazon, Toyota, and GXO (a logistics provider for major brands) are utilizing Digit to perform essential tasks such as moving and stacking shipping totes. This widespread adoption by major corporations signals a transition from novelty to demonstrable cost savings.

While Digit represents a significant step forward, it still has limitations. Its lifting capacity is currently capped at 35 pounds, and making it stronger would require a heavier battery and more frequent recharging. Furthermore, because humanoids are built to move around and work close to people, the safety standards still being developed for them need to be stricter than those applied to traditional industrial robots.

Digit’s development illustrates that the current revolution in robot training is not converging on a single methodology. Agility Robotics, for instance, employs simulation techniques akin to those used by OpenAI for its robotic hand, while also integrating Google’s Gemini models to enhance environmental adaptability. This diverse application of advanced AI techniques, learned through a decade of experimentation, has finally propelled the industry from conceptualization to tangible, large-scale implementation.

Broader Implications and the Road Ahead

The substantial increase in investment and the rapid advancements in AI-driven robotics signal a paradigm shift with far-reaching implications. The prospect of robots capable of performing complex tasks in dynamic environments, interacting with humans naturally, and assisting in areas like elder care, disaster relief, or hazardous material handling is moving closer to reality.

However, this progress is not without its challenges. The ethical considerations surrounding AI’s potential for misuse, the societal impact of automation on employment, and the need for robust safety protocols for increasingly autonomous machines will require careful consideration and proactive regulation. The "dream big, build small" era of robotics is clearly over. The convergence of sophisticated AI, particularly LLMs, with practical engineering has unlocked a new era of ambition, where the focus is on building robots that are not only intelligent and adaptable but also truly helpful and integrated into the fabric of our daily lives and industries. The next decade promises to be a period of unprecedented innovation and transformation as these advanced machines begin to fulfill their long-held potential.