The 2026 AI Index Report Reveals a Rapidly Evolving Landscape Facing Significant Challenges

The artificial intelligence landscape, often characterized by a cacophony of breathless hype and dire warnings, is receiving a comprehensive, data-driven assessment from Stanford University’s Institute for Human-Centered Artificial Intelligence (HAI). The release of the 2026 AI Index, the annual report card on the state of AI, aims to cut through the noise and provide a clearer picture of the technology’s trajectory, adoption, and impact. This year’s report underscores a period of unprecedented acceleration in AI development and deployment, accompanied by burgeoning economic investment, but also significant environmental, ethical, and regulatory challenges.

Unprecedented Pace of Development and Adoption

Contrary to some predictions of an impending plateau in AI capabilities, the 2026 AI Index asserts that leading AI models continue to exhibit remarkable improvements. This rapid advancement is not confined to research labs; AI adoption rates are outpacing those of transformative technologies like the personal computer and the internet. This swift integration into various sectors is fueling a revenue boom for AI companies, reminiscent of previous technology gold rushes. However, this growth is accompanied by colossal expenditures, with companies investing hundreds of billions of dollars in critical infrastructure such as data centers and advanced semiconductor chips.

The report highlights a stark reality: AI development is outstripping the ability of established systems (benchmarking methodologies, governance frameworks, and labor markets) to keep up. AI is operating at a sprint, while the rest of society is still lacing up its running shoes, struggling to adapt and comprehend the implications.

Environmental and Supply Chain Strains Emerge

The sheer scale and speed of AI development are not without significant environmental and infrastructural costs. AI data centers globally now draw an estimated 29.6 gigawatts of power, a figure comparable to the peak electricity demand of the entire state of New York. The operational demands of individual advanced models are also coming under scrutiny. For instance, the annual water usage associated with running OpenAI’s GPT-4o alone could, according to estimates, exceed the drinking water needs of 1.2 million people. This raises critical questions about the sustainability of current AI development models and the potential strain on natural resources.

Furthermore, the global supply chain for the specialized chips essential for AI computation presents a notable vulnerability. The United States hosts a significant majority of the world’s AI data centers, yet a single company in Taiwan, TSMC, fabricates nearly all leading AI chips. This concentration of manufacturing capacity introduces a significant risk of disruption, underscoring the geopolitical and economic fragility embedded within the AI ecosystem.

The US and China: A Tightening Race for AI Supremacy

The 2026 AI Index reveals a fiercely competitive landscape between the United States and China in the realm of AI model performance, with geopolitical implications of immense significance. According to Arena, a community-driven platform that enables direct comparison of large language model outputs on identical prompts, the two nations are nearly indistinguishable at the cutting edge.

While OpenAI’s ChatGPT held a notable lead in early 2023, this advantage has been steadily eroded by the release of competing models from Google and Anthropic. By February 2025, R1, an AI model developed by the Chinese lab DeepSeek, briefly achieved parity with the leading US model. As of March 2026, Anthropic holds a narrow lead, with xAI, Google, and OpenAI closely following. Chinese models, including DeepSeek and Alibaba’s offerings, are trailing only marginally. This intense competition has shifted the focus from raw performance metrics to critical factors such as cost-effectiveness, reliability, and practical real-world utility.

The report delineates distinct strengths for each nation. The United States boasts more powerful AI models, greater access to capital, and an estimated 5,427 data centers, more than ten times the number in any other country. Conversely, China leads in AI research publications, patent filings, and advancements in robotics.

This escalating rivalry has led to a concerning trend of reduced transparency. Leading AI companies, including OpenAI, Anthropic, and Google, are increasingly withholding details about their training code, parameter counts, and dataset sizes. This lack of openness, as noted by Yolanda Gil, a computer scientist at the University of Southern California and co-author of the report, impedes independent researchers’ ability to thoroughly study and address the safety implications of these powerful models. "We don’t know a lot of things about predicting model behaviors," Gil stated, emphasizing the challenges this opacity creates for ensuring AI safety.

AI Models Demonstrate Remarkable, Yet Inconsistent, Progress

The AI Index paints a picture of a technology that continues to defy expectations of stagnation. By several key metrics, AI models now rival or surpass human expert performance in areas such as advanced science, mathematics, and language comprehension, tasks typically associated with doctoral-level expertise. The SWE-bench Verified benchmark, which assesses AI models in software engineering, has seen top scores surge from approximately 60% in 2024 to nearly 100% in 2025. In a significant development, an AI system independently produced a weather forecast in 2025, demonstrating an emerging capacity for complex, real-world task execution.

"I am stunned that this technology continues to improve, and it’s just not plateauing in any way," commented Gil on the relentless upward trajectory of AI capabilities.

However, this progress is not uniform. AI exhibits what is termed "jagged intelligence," excelling in data-driven tasks but struggling with real-world embodiment and nuanced understanding. Robots, for example, are still in their nascent stages, achieving success in only 12% of household tasks. While self-driving car technology is more mature, with Waymo operating in five US cities and Baidu’s Apollo Go shuttling passengers in China, AI’s integration into professional fields like law and finance is still evolving, with no single model yet dominating these domains.

The Crisis of AI Benchmarking and Transparency

The impressive performance figures reported by AI developers must be viewed with caution, as the very benchmarks used to measure progress are themselves struggling to keep pace. The Stanford report highlights that many benchmarks are quickly becoming obsolete as AI models "blow past their ceilings." Compounding this issue, some benchmarks are poorly constructed, with a popular mathematics benchmark exhibiting a 42% error rate. Others are susceptible to manipulation, where models can achieve high scores by being trained on test data rather than by genuine improvement in their underlying intelligence.

The disconnect between benchmark performance and real-world efficacy is a significant concern. As the report notes, strong performance on a benchmark does not always translate to dependable real-world application, particularly for complex, interactive AI systems like agents and robots, for which robust benchmarks are largely absent.

The increasing lack of transparency from AI companies further complicates independent assessment. Many organizations are reportedly withholding results from responsible AI benchmarks, leading to suspicions that their models may not perform as well in these critical areas. "A lot of companies are not releasing how their models do in certain benchmarks, particularly the responsible-AI benchmarks," Gil observed. "The absence of how your model is doing on a benchmark maybe says something." This opacity hinders the crucial work of ensuring AI is developed and deployed safely and ethically.

The Shifting Sands of the Job Market

Just three years after entering the mainstream, AI is now used by more than half the global population, an adoption rate exceeding that of the personal computer and the internet. An estimated 88% of organizations have integrated AI into their operations, and four out of five university students use it in their academic work.

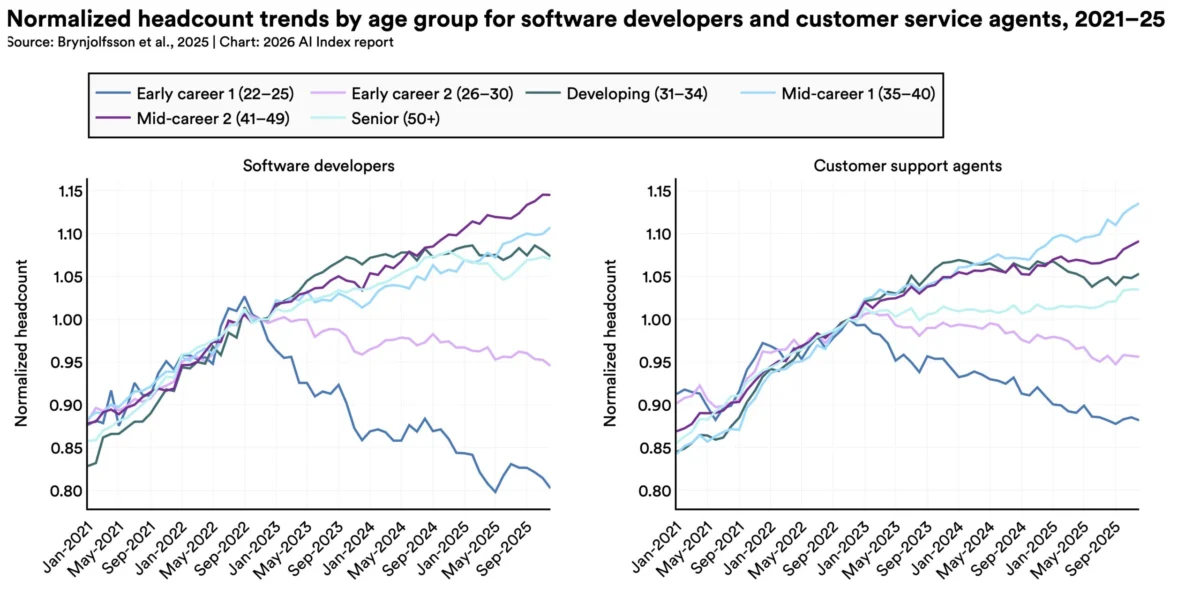

While the full impact of AI on employment is still unfolding, early indications suggest a discernible effect on younger workers in specific professions. Research from Stanford economists in 2025 indicated a nearly 20% decline in employment for software developers aged 22 to 25 since 2022. Although broader macroeconomic factors may also contribute to this trend, AI appears to play a significant role.

Hiring may continue to tighten, according to employer surveys. A 2025 McKinsey & Company survey revealed that one-third of organizations anticipate AI will lead to workforce reductions in the coming year, particularly in service, supply chain operations, and software engineering. While AI demonstrably boosts productivity (by 14% in customer service and 26% in software development, according to research cited in the index), these gains are less pronounced in tasks demanding higher levels of judgment. Consequently, the comprehensive economic implications of AI remain a subject of ongoing analysis.

Public Sentiment: A Mix of Optimism and Apprehension

Global public opinion on AI is characterized by a duality of optimism and anxiety. An Ipsos survey cited in the index found that 59% of people believe AI will yield more benefits than drawbacks, while simultaneously, 52% express nervousness about its proliferation.

A notable divergence exists between expert and public perceptions of AI’s future. A Pew survey highlighted a significant gap in expectations regarding the future of work: 73% of AI experts anticipate a positive impact on how people perform their jobs, a sentiment shared by only 23% of the general American public. Experts also exhibit greater optimism regarding AI’s influence on education and healthcare, though both groups concur that AI poses risks to elections and personal relationships.

Americans, in particular, express the lowest trust among surveyed nations in their government’s capacity to effectively regulate AI. More Americans are concerned that federal AI regulation will be insufficient than that it will be overly stringent.

Governments Grapple with AI Regulation

Governments worldwide are finding it challenging to establish effective regulatory frameworks for AI, though some progress was observed in the past year. The European Union’s AI Act saw its initial prohibitions take effect, banning the use of AI in predictive policing and emotion recognition. Japan, South Korea, and Italy also enacted national AI legislation. In contrast, the US federal government has leaned towards deregulation, with President Trump issuing an executive order aimed at limiting state-level AI regulation.

Despite this federal stance, US state legislatures have been more active, passing a record 150 AI-related bills. California enacted landmark legislation, including SB 53, which mandates safety disclosures and whistleblower protections for AI model developers. New York’s RAISE Act requires AI companies to publish safety protocols and report critical safety incidents.

However, the sheer volume of legislative activity is not necessarily keeping pace with the technology’s rapid evolution. "Governments are cautious to regulate AI because… we don’t understand many things very well," stated Gil. "We don’t have a good handle on those systems." This fundamental lack of understanding poses a significant hurdle to developing comprehensive and effective AI governance.

The 2026 AI Index serves as a crucial, albeit complex, snapshot of a technology that is rapidly reshaping our world. It underscores the urgent need for a more coordinated, transparent, and sustainable approach to AI development, deployment, and governance to harness its potential while mitigating its risks.