MareNostrum V: A Glimpse Inside the 200 Million Euro Supercomputer Revolutionizing Scientific Discovery

Nestled within the picturesque campus of the Polytechnic University of Catalonia in Barcelona, the historic Torre Girona chapel stands as a testament to 19th-century architectural grandeur, featuring a prominent cross, soaring arches, and vibrant stained glass. Yet, within its main hall, a modern marvel is preserved: the original 2004 racks of the MareNostrum supercomputer, now a museum piece. Adjacent to this historical landmark, a dedicated, climate-controlled facility houses the latest iteration, MareNostrum V, a formidable machine that ranks among the fifteen most powerful supercomputers globally. This article delves into the architecture, operational realities, and profound implications of MareNostrum V, offering an insider’s perspective on interacting with a 200-million-euro computational powerhouse.

The transition from conventional cloud computing, such as leveraging Amazon Web Services’ EC2 instances or distributed frameworks like Spark and Ray, to the realm of High-Performance Computing (HPC) at the supercomputer level represents a paradigm shift. MareNostrum V operates under distinct architectural principles, employs specialized schedulers, and functions on a scale that is challenging to fully comprehend until experienced firsthand. The author recently utilized MareNostrum V for an extensive project generating synthetic data crucial for a machine learning surrogate model, providing a unique opportunity to explore the inner workings of this advanced system.

The Unseen Foundation: Rethinking Network Architecture

A fundamental misconception often arises when approaching HPC: users are not merely accessing a singular, exceptionally powerful computer. Instead, they are submitting tasks that are meticulously distributed across thousands of independent computing nodes, interconnected by an ultra-high-speed network. For data scientists accustomed to distributed computing, the performance of this network is paramount. As evidenced by the frustration of watching expensive GPUs lie idle during lengthy data transfers when training massive neural networks across multiple cloud instances, it becomes clear that in distributed environments, "the network is the computer."

To circumvent these critical bottlenecks, MareNostrum V employs an advanced InfiniBand NDR200 fabric. This high-speed interconnect is configured in a sophisticated "fat-tree" topology. Unlike conventional office networks where bandwidth can become congested as numerous devices attempt to communicate through a central switch, the fat-tree design proactively addresses this issue. It achieves this by progressively increasing the bandwidth of network links as they ascend the hierarchy, akin to thickening branches closer to the main trunk of a tree. This design guarantees non-blocking bandwidth, ensuring that any of the system’s 8,000 nodes can communicate with any other node with minimal and consistent latency. This intricate networking infrastructure is a joint investment from the EuroHPC Joint Undertaking, Spain, Portugal, and Turkey, underscoring the collaborative effort to advance European supercomputing capabilities.

A Dual-Core Powerhouse: General Purpose and Accelerated Computing

MareNostrum V is strategically divided into two primary computational partitions, each engineered for specific workloads:

General Purpose Partition (GPP)

This segment is optimized for highly parallelized CPU-intensive tasks. It comprises 6,408 nodes, each equipped with 112 Intel Sapphire Rapids cores. Collectively, this partition boasts a peak performance of 45.9 Petaflops (quadrillions of floating-point operations per second), making it the primary workhorse for a wide array of "general" computing demands.

Accelerated Partition (ACC)

Tailored for specialized applications such as AI training, molecular dynamics simulations, and other computationally intensive workloads, the Accelerated Partition features 1,120 nodes. Each of these nodes is outfitted with four NVIDIA H100 SXM GPUs. The sheer scale of the GPU investment is staggering; considering that a single H100 GPU retails for approximately $25,000, the cost of GPUs alone for this partition exceeds $110 million. This specialized hardware endows the ACC with a significantly higher peak performance, reaching an impressive 260 Petaflops, far surpassing that of the GPP.

Integral to the system’s operation are the Login Nodes. These serve as the primary entry point for users. When an individual connects to MareNostrum V via SSH, they first land on a login node. These nodes are designated for lightweight operations exclusively, including file transfers, code compilation, and the submission of job scripts to the scheduler. They are not intended for computational processing.

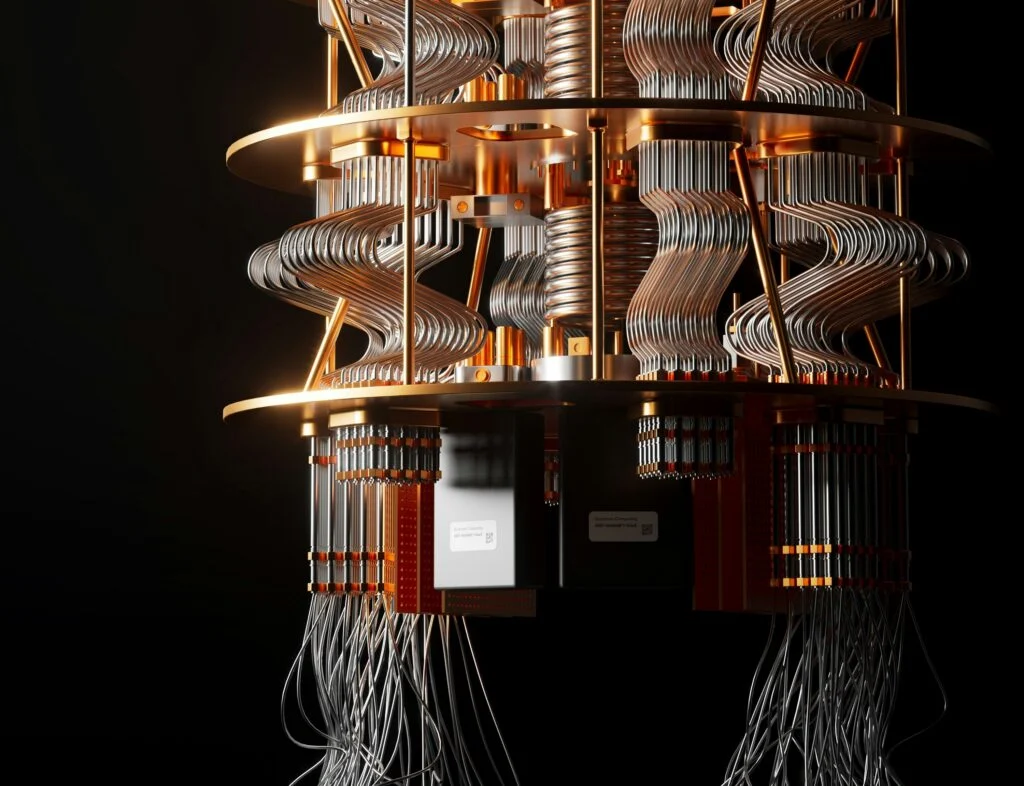

The Dawn of Quantum Integration: A Hybrid Computing Frontier

Beyond its classical computing architecture, MareNostrum V is pioneering the integration of quantum computing. The system has been physically and logically linked with Spain’s nascent quantum computing infrastructure. This integration includes a gate-based quantum system and the recently acquired MareNostrum-Ona, a state-of-the-art quantum annealer leveraging superconducting qubits. These Quantum Processing Units (QPUs) do not replace the classical supercomputer; rather, they function as highly specialized accelerators. When MareNostrum V encounters exceptionally complex optimization problems or quantum chemistry simulations that would overwhelm even its powerful H100 GPUs, it can offload these specific calculations to the quantum hardware. This synergy creates a formidable hybrid classical-quantum computing powerhouse, pushing the boundaries of scientific research.

Navigating the HPC Landscape: Airgaps, Quotas, and Operational Realities

Understanding the hardware is only one facet of mastering HPC. The operational protocols of a supercomputer diverge significantly from those of commercial cloud providers. MareNostrum V, as a publicly funded shared resource, operates under stringent security measures and fair-use policies.

The Unyielding Airgap

One of the most striking adjustments for data scientists transitioning to HPC is the strict network isolation. While external access is possible via SSH, the compute nodes themselves are completely cut off from the public internet. There is no outbound connection, meaning users cannot directly install libraries with pip install, download datasets with wget, or connect to external repositories like HuggingFace. All necessary software, libraries, and datasets must be pre-downloaded, compiled, and staged within the user’s designated storage directory before a job can be submitted. Fortunately, MareNostrum administrators mitigate this challenge by providing a comprehensive suite of commonly used libraries and software through a convenient module system.

Data Flow and Bottlenecks

Data ingress and egress are managed through the login nodes using secure copy (scp) or remote synchronization (rsync) protocols. Raw datasets are uploaded via SSH, then processed by the compute nodes. The processed results are subsequently downloaded back to the user’s local machine for post-processing and visualization. A surprising consequence of this strict data boundary is that, given the immense computational speed of the system, the bottleneck can shift to the extraction of finished results to the local machine.

Limits and Quotas: The Discipline of Resource Management

Unrestricted access is not feasible. MareNostrum V operates on a system of quotas and limits to ensure equitable resource allocation among its numerous users. Each project is assigned a specific CPU-hour budget, and hard limits are imposed on the number of concurrent jobs a single user can run or queue. Furthermore, every submitted job must specify a strict "wall-time" limit. Supercomputers do not tolerate delays; if a job exceeds its allocated time, the scheduler will ruthlessly terminate the process to free up resources for the next researcher.

Silent Execution: Logging in the Dark

The nature of job submission to a scheduler means there is no live terminal output to monitor. All standard output (stdout) and standard error (stderr) are automatically redirected into log files. Upon job completion or failure, users must meticulously review these text files to verify results or debug their code. Tools like squeue and the ability to tail -f log files provide essential visibility into job status and progress.

Mastering the Command Line: The SLURM Workload Manager

Upon successful application for research allocation and subsequent SSH login to MareNostrum V, users are greeted by a standard Linux terminal prompt. Despite the immense computational power behind this interface, there are no ostentatious displays or graphical indicators of its capabilities. This understated appearance belies the sophisticated system orchestrating its operations.

Directly executing computationally intensive Python or C++ scripts on a login node is strictly prohibited. Such actions would overload the login node, impacting other users and invariably leading to a stern communication from the system administrators. Instead, High-Performance Computing relies on a workload manager known as SLURM (Simple Linux Utility for Resource Management). SLURM is an open-source software responsible for scheduling jobs across numerous computer clusters and supercomputers.

Users interact with SLURM by creating a bash script that specifies their resource requirements, including the desired hardware, software environments, and the code to be executed. SLURM then places the job in a queue, allocates the necessary hardware when available, executes the code, and releases the nodes upon completion.

Communication with the SLURM scheduler is facilitated through #SBATCH directives embedded at the top of the submission script. These directives function as a detailed request for resources, akin to a shopping list for the supercomputer. Essential directives include:

#SBATCH --job-name: Assigns a name to the job for identification.#SBATCH --output: Specifies the file for standard output.#SBATCH --error: Designates the file for standard error messages.#SBATCH --time: Sets the maximum execution time for the job.#SBATCH --nodes: Defines the number of compute nodes required.#SBATCH --ntasks: Specifies the number of tasks (processes) to run.#SBATCH --cpus-per-task: Indicates the number of CPU cores per task.#SBATCH --mem: Sets the memory allocation per node.#SBATCH --partition: Selects the specific compute partition (e.g., GPP or ACC).#SBATCH --account: Specifies the project account for billing and tracking.

A Practical Application: Orchestrating a Computational Fluid Dynamics Sweep

To illustrate the practical application of MareNostrum V, consider a scenario involving the creation of a machine learning surrogate model for predicting aerodynamic downforce. This task necessitated the generation of ground-truth data from 50 high-fidelity Computational Fluid Dynamics (CFD) simulations, each performed on a distinct 3D mesh.

A typical SLURM job script for a single OpenFOAM CFD case on the General Purpose Partition would look like this:

#!/bin/bash

#SBATCH --job-name=cfd_sweep

#SBATCH --output=logs/sim_%j.out

#SBATCH --error=logs/sim_%j.err

#SBATCH --qos=gp_debug

#SBATCH --time=00:30:00

#SBATCH --nodes=1

#SBATCH --ntasks=6

#SBATCH --account=nct_293

module purge

module load OpenFOAM/11-foss-2023a

source $FOAM_BASH

# MPI launchers handle core mapping automatically

srun --mpi=pmix surfaceFeatureExtract

srun --mpi=pmix blockMesh

srun --mpi=pmix decomposePar -force

srun --mpi=pmix snappyHexMesh -parallel -overwrite

srun --mpi=pmix potentialFoam -parallel

srun --mpi=pmix simpleFoam -parallel

srun --mpi=pmix reconstructParInstead of manually submitting each of the 50 jobs, which would overwhelm the scheduler, a more efficient approach involves leveraging SLURM’s dependency management features to chain jobs sequentially. This creates a streamlined, automated data pipeline:

#!/bin/bash

PREV_JOB_ID=""

for CASE_DIR in cases/case_*; do

cd $CASE_DIR

if [ -z "$PREV_JOB_ID" ]; then

OUT=$(sbatch run_all.sh)

else

OUT=$(sbatch --dependency=afterany:$PREV_JOB_ID run_all.sh)

fi

PREV_JOB_ID=$(echo $OUT | awk 'print $4')

cd ../..

doneThis orchestrator script submits a chain of 50 jobs to the queue within seconds. The researcher can then leave the system to process, and by the following morning, all 50 aerodynamic evaluations are completed, logged, and prepared for tensor conversion for machine learning training.

The Constraints of Parallelism: Understanding Amdahl’s Law

A common query from newcomers to HPC concerns the seemingly low number of tasks requested relative to the available cores. For instance, why request only 6 tasks (ntasks=6) for a CFD simulation when a node possesses 112 cores? The answer lies in Amdahl’s Law.

This fundamental principle posits that the theoretical speedup achievable by parallelizing a program is inherently limited by the fraction of the code that must be executed serially. The law is mathematically expressed as:

[S=frac1(1-p)+fracpN]

Where:

Srepresents the overall speedup.pis the proportion of the code that can be parallelized.1-pis the strictly serial fraction of the code.Nis the number of processing cores.

The (1-p) term in the denominator creates an insurmountable ceiling. If even a small percentage (e.g., 5%) of a program is fundamentally sequential, the maximum theoretical speedup is capped, regardless of the number of cores employed. Furthermore, dividing a task across an excessive number of cores can amplify communication overheads. If cores spend more time exchanging data than performing computations, adding more hardware can paradoxically slow down the program.

As illustrated by performance graphs, for small-scale simulations, runtime can increase beyond a certain thread count. Only at massive scales do the hardware resources become fully productive. Writing efficient code for a supercomputer thus becomes an exercise in meticulously managing the compute-to-communication ratio.

Accessing the Power: Publicly Funded Research Resources

Despite its substantial cost, access to MareNostrum V is provided free of charge to researchers, with compute time treated as a publicly funded scientific resource. Researchers affiliated with Spanish institutions can apply through the Spanish Supercomputing Network (RES). For those across Europe, the EuroHPC Joint Undertaking regularly hosts access calls. Their "Development Access" track is particularly beneficial for data scientists, offering a pathway for porting code or benchmarking machine learning models.

The seemingly ordinary SSH prompt that greets researchers upon login belies the extraordinary infrastructure it connects to: 8,000 interconnected nodes, an InfiniBand fabric transmitting data at 200 Gb/s, and a sophisticated scheduler coordinating hundreds of concurrent jobs from researchers across multiple nations. The persistent mental model of a "single powerful computer" often overshadows the distributed reality that underpins modern computing. This distributed nature, far from being inaccessible, is remarkably more attainable than many realize, enabling groundbreaking scientific endeavors.